Your daily signal boost from 190,000+ articles, served with a DJ's ear for what actually matters.

So, What Actually Happened?

Monday morning, and the bassline that landed over the weekend is unmistakable. Inside 36 hours, South Korea's national growth fund committed 560 billion won to AI unicorn Upstage and the homegrown stack around it, Saudi Arabia's PIF-backed HUMAIN expanded its AWS partnership to launch a “global AI operating system” called HUMAIN One, and Chinese courts handed down landmark rulings saying AI cannot be a free-pass justification for mass layoffs. We scanned 190,000 articles this week so you don't have to, and three sovereign-AI playbooks just landed on the same desk from three different jurisdictions. Last week the procurement template was a “named operational AI partner list.” This week the template is who underwrites the operating cost, who carries the labor liability, and which sovereign capital structure backs the stack you bought.

The Bottom Line: Friday belonged to the partner list. Monday belongs to the sovereign-AI ledger. The CIO who walks into Tuesday's operating committee with one named answer across procurement source, labor-liability exposure, and sovereign-capital alignment sets the operating posture for the next four review cycles. Everyone else is going to spend the quarter chasing a map that was redrawn while they were drafting last week's plan.

The Tracks That Matter

1. South Korea Just Wired 560 Billion Won Into Upstage And Named A Sovereign AI Champion, And The “Buy American” AI Procurement Default Just Got Its First Real Counterweight

The cleanest sovereign-AI signal of the weekend is sitting on a Korean financial-services wire most enterprise procurement decks will skim past. South Korea's national growth fund approved a 560 billion won (roughly $400 million) commitment routed through the Financial Services Commission directly into homegrown AI unicorn Upstage and the broader Korean AI stack around it, with a parallel write-up from Korea JoongAng Daily naming the FSC approval and the strategic positioning of Upstage as the country's named AI champion. Pair it with the US National Geospatial-Intelligence Agency pushing AI adoption as demand grows for ”always-on” intelligence, and the operating thesis lines up. The world's regulated buyers are no longer choosing between American and Chinese AI stacks. They are wiring sovereign capital into named local champions and writing the procurement preference into law and budget at the same time.

The strategic implication is that the procurement scorecard for any multinational enterprise just gained a new column: ”sovereign-capital alignment of the AI vendor we're about to standardize on.” For two years, AI procurement was scored on capability and price. After this weekend, the question is ”if we sign a five-year contract with vendor X, are we exposed in a market where the local sovereign fund just backed vendor Y, and what is our exit cost when the next procurement preference rule lands in Seoul, Riyadh, or Brussels?” The CIO whose 2026 vendor scorecard treats the global hyperscalers as the only viable AI infrastructure layer is reading from a 2024 model. The CIO who builds a sovereign-alignment column into the scorecard, with named local champions per jurisdiction and named contractual exit clauses, will renegotiate Q3 and Q4 contracts from a position of optionality, not lock-in.

The deeper signal is that Korea is not improvising. It is following a template the Saudis, the Emiratis, and the Indians are running in parallel. Expect at least two more named sovereign AI funds to publish a ”homegrown champion” allocation inside twelve months, expect the first wave of Tier-1 European banks to add a ”sovereign-capital alignment” line to their AI vendor risk register by Q3, and expect at least one major American hyperscaler to restructure its enterprise pricing in the affected jurisdictions inside two cycles to defend share. The buyer who already runs a per-jurisdiction sovereign map will negotiate from named evidence. The one who does not will discover the gap in the Q1 2027 audit, when the cost of retrofitting a sovereign-aware vendor strategy is two to three times the cost of building it now.

Here's what works: Before the next vendor-procurement review, ask one named question of the CIO and chief procurement officer together: ”for our top three multi-year AI infrastructure commitments, do we have a named per-jurisdiction sovereign-alignment view, with named local champions per market, named contractual exit clauses, and a named cost-to-switch number?” If the answer is ”we standardize globally,” that is the project. The Korean wire is the trigger; the per-jurisdiction sovereign map is the deliverable. The CIO who ships it first will renegotiate two contracts from optionality. The one who waits will be retrofitting the map under a new procurement preference rule.

2. Saudi PIF-Backed HUMAIN Just Named Its AWS Partnership “HUMAIN One” And Pitched It As A Global AI Operating System, And The Sovereign-AI-As-A-Service Category Just Got Its First Branded Product

The cleanest hyperscaler-meets-sovereign signal of the weekend is sitting on an Arabian Business wire that most cloud-strategy decks have not yet picked up. HUMAIN, the PIF-backed Saudi AI vehicle, expanded its existing AWS partnership and rebranded the joint output as ”HUMAIN One,” pitching the stack as a global AI operating system rather than a regional cloud overlay. Read it next to the BizTech Magazine recap of Google Cloud Next 2026 framing meaningful impact from agentic AI as the next procurement question, and the new template comes into focus fast. Sovereign capital is no longer just funding regional clouds. It is paying a hyperscaler to co-brand a stack the sovereign can sell into adjacent markets, with the sovereign's name on the operating system layer and the hyperscaler's compute underneath.

The strategic implication is that the cloud-vendor scorecard just got a new bridge category: ”co-branded sovereign AI operating systems with named exit terms and named data-residency commitments.” For a decade, hyperscaler procurement was scored on regional availability, compliance, and price. After HUMAIN One, the question is ”if our AWS workloads sit on a co-branded sovereign stack in Riyadh, who owns the operational data, who carries the model-residency obligation, and what does the contract look like when we expand into the next sovereign market that wants its own co-branded layer?” The chief data officer whose 2026 cloud architecture treats AWS, Azure, and Google Cloud as three flat regions is going to spend Q4 retrofitting sovereign-overlay contracts the procurement team did not see coming. The CDO who builds a ”co-branded sovereign overlay” line into the cloud architecture review now will set the operating template for the next two cycles of regional expansion.

The deeper signal is that ”AI operating system” is being claimed as branded product language by a sovereign vehicle, not by a hyperscaler. That is the inversion. For two years, the OS-of-AI conversation belonged to OpenAI, to Microsoft Copilot, to Salesforce Agentforce. Now a Saudi sovereign vehicle is sitting on a hyperscaler's pipes and stamping its own name on the operating layer. Expect at least two more sovereign vehicles to launch named AI operating systems on hyperscaler infrastructure inside twelve months, expect the first regulated buyer to standardize a sovereign-overlay procurement clause across Tier-1 markets by Q4, and expect the hyperscalers to publish reference architectures for ”sovereign AI overlay” deployments to defend the underlying compute share.

Here's what works: Before the next cloud architecture review, ask the chief data officer and chief information security officer together: ”for our top three regulated markets, do we have a named position on co-branded sovereign AI overlays, with named data-residency commitments, named operational-data ownership terms, and a named exit cost if the sovereign mandates a switch?” If the answer is ”we run on the global hyperscaler,” that is the project. The HUMAIN One announcement is the trigger; the sovereign-overlay procurement clause is the deliverable. The CDO who ships it before Q3 will absorb the next sovereign overlay as a routine cloud architecture update. The one who waits will be writing the clause under a regulator's deadline.

3. Chinese Courts Just Ruled AI Cannot Be A Free-Pass Justification For Mass Layoffs, And The Labor Liability Wing Of The AI Operating Stack Just Got Its First Real Precedent

The cleanest labor-and-AI signal of the weekend is sitting on a CNBC TV18 wire most HR-tech roadmaps will not pick up before Wednesday. Chinese courts issued landmark rulings stating that AI deployment cannot be cited as a free-pass justification for mass layoffs. The rulings explicitly require employers to demonstrate genuine restructuring need rather than simply invoke automation. Pair that with two other weekend reads: the Walker & Dunlop thesis that AI is reshaping income durability in commercial real estate as it climbs the income ladder, and the Diwo decision-intelligence framing of the gap between augmented analytics and decision-shaped output. A different operating thesis lands. The legal envelope around AI-driven workforce decisions has stopped being a 2027 hypothetical. It is now case law in the world's second-largest economy, and the precedent is going to ripple into adjacent jurisdictions inside two cycles.

The strategic implication is that the chief people officer's 2026 workforce plan just gained an explicit labor-liability column. For two years, ”we redeployed roles because AI improved productivity” was treated as a clean operational narrative. After the Chinese rulings, the question is ”for every named AI-driven role-elimination decision in the last 18 months, do we have documented evidence of genuine restructuring need, named human reviewers in the decision chain, and a defensible audit trail under a labor regulator's named documentation request?” The CPO whose 2026 plan still treats AI-driven restructuring as a productivity story is reading from a 2024 narrative. The CPO who refactors the plan around named decision-trail discipline, named human reviewers per role decision, and named labor-liability exposure per market will absorb the next 18 months of cross-border labor scrutiny as routine operating updates.

The deeper signal is that the labor wing of the AI operating stack is converging with the data-security wing, the procurement wing, and the underwriting wing. The named decision-trail discipline that JPMorgan executives flagged for AI-influenced financial decisions, the named third pillar that Cyberhaven flagged for AI consumption of regulated data, and the named labor-liability documentation that the Chinese courts have just made operational are all the same operating muscle: a documented chain of human accountability behind every consequential AI-influenced decision. Expect at least two large EU labor regulators to issue similar rulings or guidance inside twelve months, expect the first wave of multinational employers to add a ”labor-liability per market” line to the AI workforce dashboard by Q3, and expect at least one major US class action filing on AI-driven layoffs to land in federal court inside two cycles.

Here's what works: Before the next workforce planning review, ask the CPO and general counsel together: ”for every AI-driven role-elimination decision in the last 18 months, do we have a named human reviewer in the decision chain, a documented restructuring rationale, and a defensible audit trail under a labor regulator's documentation request, named per market?” If the honest answer is ”we have a productivity narrative,” that is the project. The Chinese rulings are the trigger; the named labor-liability audit trail is the deliverable. The CPO who ships it first will close the next cross-border restructuring as a routine operating event. The one who does not will be writing the audit trail in a courtroom filing.

4. NetApp Just Pitched Deeper Google Cloud AI Integration As A Game Changer, And The Storage Tier Of The AI Operating Stack Just Walked Onto The Architecture Review

The single most under-covered architectural signal of the weekend is sitting on a Simply Wall St note that most CIO decks will not surface before the Q3 architecture review. NetApp's deeper Google Cloud AI integration is being framed by analysts as a game changer, with the explicit pitch that the storage tier (not the model tier) is where AI workloads either scale or stall. Read it next to the Google Cloud Next 2026 framing on deriving meaningful impact from agentic AI, and the operational picture sharpens. The conversation about “where AI scaling actually breaks” has moved from compute and model accuracy to the unsexy infrastructure layer underneath: storage architecture, data movement cost, and the latency between the data lake and the inference layer.

The strategic implication is that the chief data officer's 2026 architecture map just gained a new line item: ”named storage-tier strategy for the AI workload pipeline.” For five years, AI architecture was discussed in compute and model terms. After the NetApp framing, the question is ”for our top three production AI workloads, do we have a named storage tier with named data-movement costs, named latency budgets between training and inference, and a named exit path if the storage vendor and the model vendor diverge on roadmap?” The CDO whose 2026 architecture review still treats storage as a commodity layer underneath the compute decision is reading from a 2022 model. The CDO who builds a named storage-tier strategy with named operational metrics will absorb the next round of AI scaling questions from the CFO as a routine architectural update.

The deeper signal is that the unsexy parts of the AI operating stack are quietly being reframed as load-bearing walls. Storage, data lineage, audit trail, labor liability, sovereign overlay: each one is the kind of ”boring” architectural decision that does not show up in the AI vendor leaderboard but determines whether the AI portfolio scales without breaking the operating model. The CIO who reads the trade press of these adjacent layers (data infrastructure, regulated-buyer architecture, labor-and-employment law) is reading next quarter's architecture variance commentary before it is written.

Here's what works: Before the next architecture review, ask the chief data officer and head of platform engineering together: ”for our top three production AI workloads, do we have a named storage-tier strategy, named data-movement cost per workload, named latency budgets between training and inference, and a named exit path if the storage vendor's roadmap diverges from the model vendor's roadmap?” If the answer is ”storage is an AWS or Azure decision,” that is the project. The NetApp framing is the trigger; the named storage-tier strategy is the deliverable. The CDO who ships it before Q3 will absorb the next AI scaling question without retrofitting the architecture under a CFO budget challenge.

5. Decision Intelligence Just Got A Named Vocabulary And A Named Vendor Map, And Augmented Analytics Quietly Got A Ceiling

The cleanest analytics-architecture signal of the weekend is sitting on a Diwo knowledge-base release that most BI decks will not surface. Diwo published a long-form, citation-friendly explainer of Decision Intelligence as a Gartner-defined category that produces decisions, not insights, with a named four-element framework (action plus impact plus validation plus execution), a named six-stage decision loop, and a named vendor map distinguishing Decision Intelligence from the Augmented Analytics layer. The argument names the ceiling on Augmented Analytics: it stops short of decision-shaped output. Conversational interfaces (NL-to-SQL, LLM reasoning, multi-agent governance) are necessary but not sufficient. The next operating layer is ”Decision Flows” that turn recurring questions into one-click decisions with named accountability, named guardrails, and named retention.

The strategic implication is that the analytics roadmap for any data team that bought into Augmented Analytics in 2023 now needs a named ”Decision Intelligence overlay” line. For three years, the BI conversation was about replacing dashboards with natural-language interfaces. After this framing, the question is ”for our top five recurring strategic questions, do we have a Decision Flow with a named owner, named action triggered, named validation gate, and named execution accountability, or do we still have a dashboard and a meeting?” The chief analytics officer whose 2026 plan still leads with ”expanding Augmented Analytics adoption” is reading from a 2023 ceiling. The CAO who builds a named Decision Intelligence overlay on top will move two cycles cleaner than peers still chasing Augmented Analytics seat counts.

The contrarian read starts with the bridge concept of the day: “critical thinking” appeared across Defense Technology, AI, AI-and-Cryptocurrency, AI Research, and Data Centers, in five different articles inside a single window. That is the same operating muscle. Decision Intelligence, named decision-trail discipline, second-order thinking, named human reviewers: every one of these is the load-bearing wall under the next regulator examination, the next labor-liability ruling, and the next AI procurement audit.

Here's what works: Before the next analytics-and-AI committee, ask the chief analytics officer and chief data officer together: ”for our top five recurring strategic questions, do we have a named Decision Flow with a named owner, named action triggered, named validation gate, and named execution accountability, or are we still shipping a dashboard and a meeting?” If the answer is the second one, that is the project. The Decision Intelligence framing is the trigger; the named Decision Flow inventory is the deliverable. The CAO who ships it first runs Q4 from named operating evidence. The one who does not will be defending Augmented Analytics seat counts in a meeting that has already moved on.

6. AI Just Cracked One Of Mathematics' Hardest Open Problems, And The ”Reasoning Plateau” Frame Just Lost A Round

The single most under-covered research signal of the weekend is sitting on a EurekAlert release most enterprise leaders will skim past as ”academic news.” A new AI method directly tackled one of science's hardest open mathematical problems, demonstrating reasoning capability on a benchmark that had resisted progress for years. Pair it with the Quandary CG argument that second-order thinking is the difference between AI that works and AI that fails and the Substack synthesis arguing that as AI accuracy increases, humans may become more dependent and less skeptical, and a different operating thesis lands. The ”reasoning plateau” frame that has been comforting boards for two quarters is wobbling. The capability frontier just moved on a benchmark that was being used as a proxy for ”real reasoning will take five more years.”

The strategic implication is that the AI capability slide in every C-suite deck just got a new line. For 18 months, the calming message was ”current models are pattern matchers, the reasoning gap is a five-year problem, plan accordingly.” After this weekend's research event, the question is ”if a model can crack a previously unsolved mathematical benchmark, what does our 24-month capability plan look like, and which of our 2026 productivity assumptions are about to be repriced?” The CTO who walks into the next strategy review still leading with the reasoning-plateau frame is reading from a Q4 2025 narrative. The CTO who refactors the capability slide around ”reasoning capability moves in step changes, not plateaus” with named monitoring of new benchmark-cracking events will absorb the next 18 months of capability surprises as routine planning updates.

The deeper signal is that this is the second compounding research event in the last six weeks. Each one shortens the operational planning horizon by another quarter. Expect at least three more named ”AI cracks a previously unsolved domain” headlines inside twelve months, expect the first wave of strategy consultants to start pricing capability uncertainty into the next round of multi-year AI roadmaps by Q3, and expect at least one major industry analyst house to publish a named ”reasoning step-change” framework before Q4.

Here's what works: Before the next strategy review, ask the CTO and chief AI officer together: ”if a 24-month capability assumption gets disproved by a single research event, what is our explicit replan trigger, named monitoring source, and named decision authority to update the capability slide inside one cycle?” If the answer is ”we will revisit at the next quarterly review,” that is the project. The math breakthrough is the trigger; the named capability replan protocol is the deliverable. The CTO who ships it first will absorb the next reasoning surprise without burning a strategy cycle. The one who waits will defend last quarter's plan to a board that already read the headlines.

7. ISO 42001 Just Got An “Evidence Your Auditor Will Actually Accept” Playbook, And The AI Compliance Documentation Cycle Just Got A Reference Standard

The cleanest AI compliance signal of the weekend is sitting on an ICME documentation page most CISOs will not see until the next audit cycle. ICME published a sharp guide to ISO 42001 enforcement evidence, named explicitly as ”the evidence your auditor will actually accept,” with a documented mapping of policy to implementation to monitoring artifact. Pair it with the Morningstar argument that private credit isn't safer than banks, it's just better at hiding losses, and the operating template sharpens. ISO 42001 is being repositioned from ”an aspirational AI governance certificate” into ”the named evidence chain that survives an actual auditor walkthrough.” The firms still treating it as a marketing line on the trust page are going to discover the gap when the first regulated-buyer procurement RFP demands the evidence chain on a 30-day timeline.

The strategic implication is that the AI governance program for any regulated buyer just gained a named documentation deliverable. For 18 months, AI governance was an internal-policy exercise. After the ICME framing, the question is ”for our ISO 42001 control set, do we have named evidence per control, named owner per evidence artifact, named refresh cadence, and a named retention period that survives an external auditor's documentation request?” The CISO whose program treats ISO 42001 as a one-time certification is going to spend Q4 retrofitting the evidence chain. The CISO who builds the named evidence inventory now, with named owners and named refresh cadence, will absorb the next regulated-buyer RFP as a routine sales enablement event.

The deeper signal is that this closes the audit-trail loop that runs through every other story this week. Sovereign AI procurement, labor-liability documentation, storage-tier accountability, decision-flow ownership, capability-replan protocols, and ISO 42001 evidence: each one is a documented chain of named accountability behind a consequential AI decision. Expect at least three Big-Four firms to publish named ISO 42001 evidence playbooks inside twelve months, expect the first wave of Tier-1 enterprises to add an ”ISO 42001 evidence freshness” line to the quarterly governance dashboard by Q3, and expect at least one major procurement RFP to name a 30-day ISO 42001 evidence-chain delivery as a default requirement before Q4.

Here's what works: Before the next AI governance committee, ask the CISO and chief compliance officer together: ”for our ISO 42001 control set, do we have a named evidence artifact per control, named owner per artifact, named refresh cadence, and a named retention period, and does the chain survive an external auditor walkthrough on a 30-day timeline?” If the honest answer is ”we have a policy document,” that is the project. The ICME framing is the trigger; the named evidence inventory is the deliverable. The CISO who ships it first turns the next regulated-buyer RFP into a routine sales enablement event. The one who does not will be writing the evidence chain under audit deadline pressure.

Signal vs. Noise

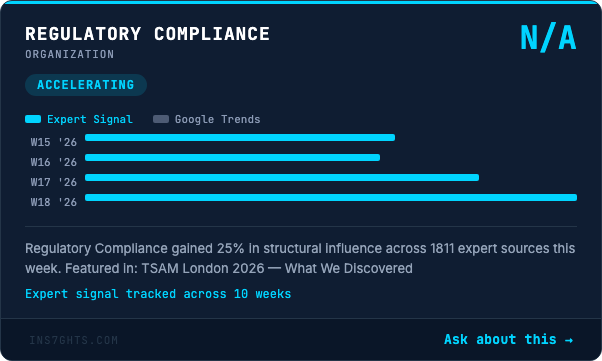

🟢 Signal: Data Management structural influence climbed 45 percent on a 280-article base, Machine Learning influence rose 27 percent on a 398-article base, and Data Analysis gained 24 percent of real influence on a 210-article base. Read those numbers as one shape and the Monday-morning operating frame becomes obvious. The conversation has rotated from the model-and-application layer (where two years of headline AI noise has lived) into the load-bearing layer underneath: the storage tier the NetApp story names, the data-management discipline the Korean and Saudi sovereign moves require, and the analytical-decision discipline the Diwo framing makes explicit. Real-world influence rising at the data-management layer while raw mention volume mildly cools means the operational center of gravity has moved. The CDO who walks into Tuesday's review with the named storage-tier strategy, the named data-management owner, and the named decision-flow inventory moves two cycles cleaner than the CDO still framing AI as a model-selection question.

🔴 Noise: Data Analytics still pulled 319 mentions but lost 29 percent of structural influence, Generative AI pulled 284 mentions while shedding 19 percent of real influence, Data Governance pulled 252 mentions while losing 27 percent, and Cybersecurity pulled 242 mentions while losing 15 percent. Each of those four labels is still attached to a flood of vendor announcements, but as undifferentiated headers they no longer carry the operational conversation. “Data Analytics” has been replaced by sharper categories: storage-tier strategy, decision-flow inventory, sovereign-overlay procurement. “Generative AI” has fragmented into named operator categories: agentic AI orchestration, AI operating systems, capability-replan protocols. “Data Governance” has fragmented into ISO 42001 evidence chains, labor-liability documentation, named decision-trail discipline. “Cybersecurity” has fragmented into the third pillar of data security, named consumption logs, and AI agent registries. Procurement intake filters that still keyword-screen on the legacy generic terms are filtering for vendor marketing, not for buyer signal. Rebuild the filter around the named operating categories and inbound vendor relevance roughly doubles inside two months.
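For readers who want to reproduce the split at home, here is a minimal sketch. It assumes the sign of the influence change alone decides the bucket, since the scoring method behind these numbers is not stated here. The `TopicReading` type and the `classify` rule are hypothetical names; the figures are the ones quoted in the Signal and Noise paragraphs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TopicReading:
    topic: str
    mentions: int          # article or mention count quoted in the text
    influence_change: int  # percent change in structural influence


# Figures copied from the Signal and Noise paragraphs above.
READINGS = [
    TopicReading("Data Management", 280, 45),
    TopicReading("Machine Learning", 398, 27),
    TopicReading("Data Analysis", 210, 24),
    TopicReading("Data Analytics", 319, -29),
    TopicReading("Generative AI", 284, -19),
    TopicReading("Data Governance", 252, -27),
    TopicReading("Cybersecurity", 242, -15),
]


def classify(reading: TopicReading) -> str:
    """Assumed rule: rising influence is signal, anything else is noise."""
    return "signal" if reading.influence_change > 0 else "noise"


def split(readings):
    """Return (signal_topics, noise_topics), preserving input order."""
    signal = [r.topic for r in readings if classify(r) == "signal"]
    noise = [r.topic for r in readings if classify(r) == "noise"]
    return signal, noise


if __name__ == "__main__":
    signal, noise = split(READINGS)
    print("signal:", signal)
    print("noise:", noise)
```

Mention volume is carried along but deliberately ignored by the rule, which mirrors the point above: a label can keep pulling hundreds of mentions while its influence falls.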

From the 190K

We scanned 190,000 articles this week. Here's what no one is talking about:

The pattern of the day is that “sovereign AI” has stopped being a Brussels-or-Beijing geopolitics conversation and quietly become a finance-committee line item. Three different sovereign capital structures (Korean state fund, Saudi PIF, Chinese court precedent) all rewrote the same operating assumption inside 36 hours, while almost no multinational vendor has updated its market map.

Watch the desks separately and you would call this three unrelated stories. Korea is processing a 560 billion won state-fund commitment to a homegrown AI champion. Saudi Arabia is processing a co-branded sovereign AI operating system on AWS infrastructure. China is processing landmark labor-court rulings on AI-driven layoffs. Read them as one substrate and the picture sharpens fast. Three different sovereign capital structures, in three different jurisdictions, in three different operating dimensions, all renegotiated the multinational vendor's pricing power, exit cost, and labor liability inside the same weekend. The strategic conversation in Tier-1 boardrooms is still framed as ”buy American or buy Chinese.” The actual operating frontier is ”negotiate the per-jurisdiction sovereign overlay, the per-jurisdiction labor liability, and the per-jurisdiction co-branded operating layer before the procurement RFP locks the contract.”

The operational implication is that the 2026 multinational AI procurement cycle will be won by the firm that consolidates these three conversations into one named ”Sovereign-AI Exposure Map,” with one integrated owner, one quarterly cadence, and one integrated dashboard covering per-jurisdiction sovereign capital alignment, per-jurisdiction labor-liability documentation, per-jurisdiction co-branded operating layer, and per-jurisdiction exit cost. The firms that let the three conversations run in parallel will discover the duplication in the Q4 audit, when the cost of consolidating after the fact is two to three times the cost of consolidating before. The firms that consolidate now will run multinational AI portfolios with a single named owner, fewer surprise variances, and a real signature on every consequential procurement decision when the first regulator examination lands.
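As a rough sketch of what one row of that map could look like, the snippet below models the four per-jurisdiction dimensions named above and lists which ones are still blank. Every name here (`JurisdictionExposure`, `missing_dimensions`, `open_gaps`) is hypothetical, and the sample values are shorthand for this week's stories, not an assessment of any real contract.

```python
from dataclasses import dataclass, fields
from typing import Dict, List, Optional


@dataclass
class JurisdictionExposure:
    """One row of a hypothetical Sovereign-AI Exposure Map."""
    jurisdiction: str
    sovereign_capital_alignment: Optional[str] = None
    labor_liability_documentation: Optional[str] = None
    co_branded_operating_layer: Optional[str] = None
    exit_cost: Optional[str] = None


def missing_dimensions(entry: JurisdictionExposure) -> List[str]:
    """Names of the dimensions that have no recorded position yet."""
    return [f.name for f in fields(entry) if getattr(entry, f.name) is None]


def open_gaps(exposure_map: List[JurisdictionExposure]) -> Dict[str, List[str]]:
    """Map each jurisdiction to its unanswered dimensions, skipping complete rows."""
    gaps = {}
    for entry in exposure_map:
        missing = missing_dimensions(entry)
        if missing:
            gaps[entry.jurisdiction] = missing
    return gaps


# Illustrative rows only: each records the one dimension this week's news touched.
EXPOSURE_MAP = [
    JurisdictionExposure("South Korea",
                         sovereign_capital_alignment="national growth fund backs Upstage"),
    JurisdictionExposure("Saudi Arabia",
                         co_branded_operating_layer="HUMAIN One on AWS"),
    JurisdictionExposure("China",
                         labor_liability_documentation="restructuring need must be documented"),
]

if __name__ == "__main__":
    for jurisdiction, missing in open_gaps(EXPOSURE_MAP).items():
        print(jurisdiction, "->", ", ".join(missing))
```

Keeping all four dimensions on one record is the design point: a gap in any column shows up against the same owner and the same cadence, instead of living in three separate decks.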

🔍 Below the surface: Here's how you spot real infrastructure. 280 articles cite Data Management with rising structural influence, 398 cite Machine Learning the same way, and the frame quietly lifting both is the move from “tooling” to “named operating discipline with named owners.” That shift does not show up in any vendor leaderboard. It shows up in the integration patterns, the role redefinitions, and the procurement vocabulary. The trade publications pulling these threads together (the Korean financial-services press, the Gulf business press, the Chinese labor-court reporters, the storage-architecture analysts, and the AI compliance-evidence press) are running a quarter ahead of the Tier-1 analyst houses, which are running two quarters ahead of operating-committee dashboards. The firms that read the trade press of the operating function adjacent to their own are reading next quarter's variance commentary before it is written.

By The Numbers

- South Korea's national growth fund committed 560 billion won to homegrown AI unicorn Upstage and the Korean AI stack around it — The cleanest single-line reframe of the multinational AI procurement conversation in months. Drop it on the next vendor scorecard review and the ”we standardize globally” assumption reframes itself in 30 seconds.

- Chinese courts handed down landmark rulings stating AI cannot be a free-pass justification for mass layoffs — The first labor-court precedent that names AI-driven workforce decisions as requiring documented restructuring rationale, not just a productivity narrative. Every multinational labor playbook now needs a named per-market labor-liability column.

- Saudi PIF-backed HUMAIN expanded its AWS partnership and rebranded the joint stack as ”HUMAIN One,” a global AI operating system — The first co-branded sovereign-AI-as-a-service product to claim the operating-system layer over a hyperscaler's compute. Cloud architecture reviews missing a named sovereign-overlay clause are operating from a 2024 contract template.

- Data Management structural influence climbed 45 percent week over week and Machine Learning climbed 27 percent, while Data Analytics shed 29 percent and Generative AI shed 19 percent — The signature of categories that have crossed from undifferentiated header into named operating language. Procurement filters still keyword-screening on the legacy generic terms are filtering for vendor marketing, not buyer signal.

- The bridge concept ”critical thinking” appeared across Defense Technology, Artificial Intelligence, AI-and-Cryptocurrency, AI Research, and Data Centers in the same window — The cleanest leading indicator that the operating frame inside enterprise AI has rotated from ”tool ownership” to ”named decision discipline across operator stacks.” The CTO whose dashboard still leads with ”AI initiatives” as a single bucket is two cycles behind operator-grade peers.

- A new AI method directly cracked one of mathematics' hardest open problems on a benchmark that had resisted progress for years — The second compounding ”reasoning step-change” research event in six weeks. Strategy reviews still leading with a ”reasoning plateau” frame are reading from a Q4 2025 narrative.

- Private credit is not safer than banks, it is just better at hiding losses, with rising AI-driven labor displacement adding to the durability question on income-generating assets — The unsexy financial-stability read of the week is also the most operationally relevant: the AI displacement story now has a named exposure on private-credit balance sheets. Most 2026 underwriting reviews do not yet name it.

- NetApp's deeper Google Cloud AI integration is being analyst-framed as a game changer because the storage tier is where AI workloads scale or stall — The unsexy news of the week is also the most operationally relevant: storage architecture is the load-bearing wall most 2026 AI architecture reviews still treat as a commodity layer.

Deep Dive: The Sovereign-AI Operating Stack Just Walked Onto The Enterprise Architecture Review, And Most Multinationals Are Reading From A Pre-Sovereign Template

Every DJ who has ever played a long international tour knows the moment when the same set lands differently in three different cities. The vinyl is the same, the order is the same, the BPM is the same, but the dancefloor in Seoul wants a different drop than the dancefloor in Riyadh, and the dancefloor in Shanghai is moving to a frequency that did not exist on the original cut. That is exactly what the weekend told us about AI procurement. The model is the same. The vendor is the same. The price is the same. But the operating context changed in three different cities at the same time, and the multinationals still spinning the unified global set are about to play to three half-empty floors on the same Monday morning.

The Sovereign-Capital Side Of The Stack

The Korean wire is the bass drop. A 560 billion won state-fund commitment to a named homegrown AI champion is the signal that the era of ”buy American by default” is over in the markets that matter most for multinational expansion. The CIO whose 2026 vendor scorecard still treats global hyperscalers as the only viable AI infrastructure layer is reading from a 2024 narrative. The CIO who builds a per-jurisdiction sovereign-alignment column into the scorecard, with named local champions per market and named contractual exit clauses, will renegotiate Q3 contracts from optionality, not lock-in.

The Co-Branded Overlay Side Of The Stack

The HUMAIN One announcement is the snare. The pitch is not ”regional cloud.” The pitch is a named global AI operating system co-branded by a sovereign vehicle on top of a hyperscaler's compute. The CDO who walks into the next architecture review with a named position on co-branded sovereign overlays, named data-residency commitments, and named operational-data ownership terms is the CDO who absorbs the next sovereign overlay as a routine cloud architecture update. The CDO who keeps treating cloud procurement as a flat regional decision will spend Q4 retrofitting overlay contracts under a regulator deadline.

The Labor-Liability Side Of The Stack

The Chinese court rulings are the hi-hat. The track runs underneath every other section of the night. Take it out, keep treating AI-driven role eliminations as a clean productivity narrative, and the entire workforce-strategy posture starts to drift. The CPO who builds a named labor-liability documentation chain per market, with named human reviewers in the decision chain and named restructuring rationale per role decision, is the CPO who closes the next cross-border restructuring as a routine operating event. The CPO who keeps the conversation in a productivity slide will be reading the next labor-court ruling in a courtroom filing.

The Capability-And-Compliance Side Of The Stack

The reasoning step-change at the math benchmark and the ISO 42001 evidence playbook are the operating muscle's vocal hook. The line is unmistakable: the capability frontier moves in step changes, not plateaus, and the compliance evidence chain has to refresh on a named cadence to survive the next external audit walkthrough. The CTO who refactors the capability slide around ”step changes, not plateaus,” with a named replan protocol, and the CISO who refactors the AI governance program around named ISO 42001 evidence with named owners and named refresh cadence, are the two roles that absorb the next 18 months of capability and compliance pressure as routine operating updates.

What Actually Works

- Stand up a Sovereign-AI Exposure Map with one named owner. CIO, CFO, CDO, CPO, and general counsel co-sign. One integrated dashboard covering per-jurisdiction sovereign capital alignment, per-jurisdiction labor-liability documentation, per-jurisdiction co-branded operating layer, and per-jurisdiction exit cost. Refreshed quarterly. Without it, the three sovereign conversations land separately and contradict each other.

- Refactor the AI vendor scorecard around per-jurisdiction sovereign-alignment columns. Every multi-year AI infrastructure commitment gets one named local champion alternative per market, one named contractual exit clause, and one named cost-to-switch number. Single global standardization is the 2024 assumption.

- Build the named labor-liability documentation chain per market. Every AI-driven role-elimination decision gets a named human reviewer, a documented restructuring rationale, and a defensible audit trail. The Chinese rulings named the question; the named documentation chain is the project.

- Ship the named ISO 42001 evidence inventory and the named capability-replan protocol. Every governance control gets a named evidence artifact, a named owner, and a named refresh cadence. The capability slide gets a named replan trigger, named monitoring source, and named decision authority. Quarterly cadence.
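For teams that want to see what a ”named evidence artifact, named owner, named refresh cadence” inventory might look like in practice, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `EvidenceItem` fields, the control names, the owners, and the 90-day cadence are hypothetical and are not taken from ISO 42001 or from any vendor tool. It only shows the idea of flagging evidence that has outlived its cadence.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class EvidenceItem:
    control: str          # governance control the artifact supports (hypothetical name)
    artifact: str         # named evidence artifact
    owner: str            # named owner
    refresh_days: int     # named refresh cadence, in days
    last_refreshed: date  # date the artifact was last updated


def stale_items(items, today):
    """Return the items whose evidence is older than its own refresh cadence."""
    return [
        item for item in items
        if today - item.last_refreshed > timedelta(days=item.refresh_days)
    ]


# Illustrative entries only; none of these are real controls or dates.
inventory = [
    EvidenceItem("model-risk-review", "review minutes", "CISO office", 90, date(2026, 1, 15)),
    EvidenceItem("training-data-lineage", "lineage report", "CDO office", 90, date(2026, 4, 1)),
]

overdue = stale_items(inventory, today=date(2026, 5, 4))
print([item.control for item in overdue])
```

The design choice worth noting is that each item carries its own cadence, so a single pass over the inventory tells the owner which artifacts would not survive an auditor walkthrough today.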

The set list is changing because the underlying sovereign-capital line is real. The DJ who keeps spinning the unified global set (one vendor, one price, one jurisdiction-agnostic AI strategy) to a room that has already split into three different sovereign frequencies is going to lose the multinational booking. The DJ who hears the bassline of the sovereign shift, names the line items, and mixes a different verse for each city is the one whose Monday morning calendar fills up. The unified global set is the support act now. Mix it for the three frequencies the rooms are already moving to.

What's Coming

The First Tier-1 US Or European Bank To Add A Sovereign-Alignment Column To Its AI Vendor Risk Register

The Korean and Saudi sovereign-capital moves landed this weekend as part of a 36-hour run across three jurisdictions. The next move is the first US or European Tier-1 bank to publish an updated AI vendor risk register that names a ”sovereign-capital alignment” column with named per-jurisdiction local champions. That update is probably one to two quarters out. The CIOs who have already drafted the column will fold the register update in cleanly. The CIOs who have not will be writing the column while the regulator's next examination cycle starts.

The First Multinational Employer To Publish A Per-Market AI Labor-Liability Documentation Standard

The Chinese court rulings are the trigger. The next move is the first multinational employer to publish a named per-market AI labor-liability documentation standard, with named human reviewers per decision, named restructuring rationale per role, and named retention periods. That announcement is probably one quarter out. The CPOs who have already drafted the standard will read the public version with the work already done. The ones who have not will spend the next quarter writing the standard under regulator pressure rather than by choice.

The First Big-Four Advisory Firm To Publish A Named ISO 42001 Evidence Chain Reference Architecture

The ICME documentation is the trigger. The next move is the first Big-Four advisory firm to publish a named ISO 42001 evidence chain reference architecture, with named evidence artifacts, named owners, and named refresh cadence. That announcement is probably one quarter out. The CISOs who have already drafted the inventory will absorb the reference architecture as routine governance enablement. The ones who have not will be writing the inventory under audit deadline pressure.

For Your Team

Strategic purpose: Monday is the day this week's signals get translated into a single integrated Sovereign-AI Exposure Map before Tuesday's operating committee. The work today is not another briefing. It is the conversation that names one signature line across per-jurisdiction sovereign capital alignment, per-jurisdiction labor-liability documentation, per-jurisdiction co-branded operating layer, and per-jurisdiction exit cost. Everything else is commentary.

Tuesday's meeting prompt: ”If South Korea just wired 560 billion won into a homegrown AI champion, Saudi Arabia just rebranded an AWS partnership as a global AI operating system, and Chinese courts just ruled AI cannot be a free-pass justification for mass layoffs in the same weekend, who in this room owns the named one-page Sovereign-AI Exposure Map across our top three multinational markets, and is that owner one person or five?”

The Sovereign-AI Exposure Map Framework:

- One named owner across four lines. CIO, CFO, CDO, CPO, and general counsel co-sign one exposure map. One page, one cadence, one dashboard. If the four accountability conversations land on separate desks with separate owners, the framework is not real.

- Named per-jurisdiction sovereign-alignment column on the AI vendor scorecard. Every multi-year AI infrastructure commitment gets one named local champion per market, one named contractual exit clause, and one named cost-to-switch number. Single global standardization is the 2024 assumption.

- Named labor-liability documentation chain per market. Every AI-driven role-elimination decision gets a named human reviewer, a documented restructuring rationale, and a defensible audit trail. The Chinese rulings named the question; the named documentation chain is the project.

- Named co-branded sovereign-overlay procurement clause across cloud contracts. Every regulated-market cloud contract gets a named position on co-branded sovereign overlays, named data-residency commitments, and named operational-data ownership terms. The HUMAIN One announcement named the category; the named clause is the deliverable.

- Named ISO 42001 evidence inventory with named owners and named refresh cadence. Every governance control gets a named evidence artifact, a named owner, and a named refresh cadence that survives an external auditor walkthrough on a 30-day timeline. The ICME framing named the gap; the named inventory is the project.
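The framework above is, underneath the vocabulary, a small data structure: one owner, one row per jurisdiction, four lines per row. The Python sketch below is one hedged way to picture it. The class names, field names, and sample entries are all hypothetical placeholders, not a published standard or an existing product. It only shows how a single owner could spot which per-jurisdiction lines are still blank before the operating committee meets.

```python
from dataclasses import dataclass, fields
from typing import Dict, List, Optional


@dataclass
class JurisdictionExposure:
    # One row of the map. Field names mirror the four lines named in the
    # framework; the string values are free-text status notes (assumption).
    jurisdiction: str
    sovereign_capital_alignment: Optional[str] = None
    labor_liability_documentation: Optional[str] = None
    cobranded_operating_layer: Optional[str] = None
    exit_cost: Optional[str] = None


def missing_lines(row: JurisdictionExposure) -> List[str]:
    """List the lines of a row that nobody has filled in yet."""
    return [f.name for f in fields(row) if getattr(row, f.name) is None]


@dataclass
class ExposureMap:
    owner: str  # the single named owner
    rows: List[JurisdictionExposure]

    def gaps(self) -> Dict[str, List[str]]:
        """Map each incomplete jurisdiction to its unfilled lines."""
        return {r.jurisdiction: missing_lines(r) for r in self.rows if missing_lines(r)}


# Placeholder entries for illustration only.
exposure_map = ExposureMap(
    owner="(one named owner)",
    rows=[
        JurisdictionExposure("South Korea", "local champion shortlisted",
                             "chain drafted", "no overlay in scope", "quantified"),
        JurisdictionExposure("Saudi Arabia", sovereign_capital_alignment="under review"),
    ],
)

print(exposure_map.gaps())
```

If the `gaps()` output needs five different people to explain it, that is the ”one person or five” question from the meeting prompt answered in data.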

Share-worthy stat: South Korea wired 560 billion won into homegrown AI unicorn Upstage in a single state-fund decision the same weekend Saudi Arabia rebranded its AWS partnership as a global AI operating system and Chinese courts ruled AI cannot justify mass layoffs. Drop all three on the next vendor scorecard review and the ”we standardize globally” assumption reframes itself in 30 seconds.

The Track of the Day

”AI will free you for the hard work of figuring out what actually matters. You don't have to memorize everything anymore, but you still have to do the hard part: deciding what matters. That's on you. Let's make sure you're really good at it.”

— Cassie Kozyrkov

Today's set: ”Heroes” by David Bowie, mixed into ”Around the World” by Daft Punk. Bowie named the moment that walked into every multinational operating committee on Monday morning, the moment when ”we can be heroes, just for one day” stops being a poetic line and starts being an operating posture under three different sovereign frequencies on the same dancefloor. Daft Punk named the answer: the work today is around the world, around the world, the same loop in three different cities, with the discipline to mix a different verse for each one. A Korean state fund wiring 560 billion won into a named local AI champion. A Saudi PIF vehicle stamping its own brand on a hyperscaler's operating layer. Chinese courts redrawing the labor-liability map for AI-driven workforce decisions. The DJ who keeps spinning the unified global set is going to play last quarter's vinyl to a room that has already split into three sovereign frequencies. The DJ who hears the bassline of the sovereign shift, names the per-jurisdiction line items, and mixes a different verse for each city is the one whose Tuesday morning meeting books the rest of the quarter. Everybody else is still trying to find the headliner on a USB the room has already stopped asking for.

Yves Mulkers, your data DJ, mixing 190,000 articles into the tracks that actually matter.

We scanned 190,000 articles this week so you don't have to. Data Pains → Business Gains.

Published: May 4, 2026 | Curated by Yves Mulkers @ Ins7ghts
