Your daily signal boost from 190,000+ articles, served with a DJ's ear for what actually matters.

So, What Actually Happened?

Tuesday morning, and the bassline that landed overnight is unmistakable. Inside 18 hours, SAP confirmed its intent to acquire data lakehouse vendor Dremio, Pixxel and Sarvam announced an AI-powered orbital data centre for India, Zyphra and AMD launched a named alternative-GPU AI cloud on MI355X silicon, and Blaize and Winmate inked a $15 million edge-AI partnership for defense, border, and maritime deployments. We scanned 190,000 articles this week so you don't have to, and the AI procurement scorecard that worked on Friday just gained four new line items: data-platform layer, sovereign space-compute layer, alternative-GPU cloud layer, and hardened edge-AI layer. Yesterday the conversation was sovereign capital. Today it is the discovery that the stack itself just split into named floors.

The Bottom Line: Monday wrote the sovereign ledger. Tuesday names the new floors of the building. The CIO who walks into Wednesday's architecture review with one named owner per layer (data platform, space compute, alternative-GPU cloud, hardened edge) sets the procurement template for the next four review cycles. Everyone else is buying floor plans for a building that already grew six stories.

The Tracks That Matter

1. SAP Just Moved To Acquire Dremio, And The Open Lakehouse Just Got Folded Into A Named Enterprise Operating Stack

The cleanest data-infrastructure signal of the week is sitting on a Dremio corporate blog most CDO decks will see only after the next architecture review. SAP confirmed its intent to acquire data lakehouse vendor Dremio, folding open-format Iceberg compute, semantic-layer queries, and federated lakehouse access directly under SAP Business Data Cloud. Pair it with the Google Cloud Gemini Enterprise Agent Platform launch and a $750 million partner innovation fund, and a clean architectural shape lands. The era when ”data lakehouse” was a neutral, vendor-agnostic primitive is closing. The lakehouse is being absorbed into named enterprise operating stacks, and the buyer is now choosing whose operating system runs the analytical layer of the company.

The strategic implication is that the chief data officer's 2026 platform map just gained a new line: ”named lakehouse-to-application-stack alignment per workload domain.” For three years, lakehouse procurement was a ”best storage and query engine for the price” decision. After this acquisition, the question becomes ”for our top three analytical domains, do we have a named lakehouse-to-application-stack alignment, with a named data-portability cost, a named exit clause if the acquirer's roadmap diverges from our application choice, and a named decision-rights owner when SAP, Oracle, Salesforce, and Databricks each absorb a layer of the open-format ecosystem?” The CDO whose platform map still treats lakehouse as a horizontal, vendor-neutral utility is reading from a 2023 narrative. The CDO who builds a named stack-alignment column into the platform review will absorb the next three lakehouse acquisitions as routine architectural updates instead of fire drills.

The deeper signal is that consolidation in the analytical layer is mirroring what already happened in the application layer a decade ago. Open-format storage and Iceberg-based query did not stay neutral, and they were never going to. The acquirers with the deepest pockets and the most existing enterprise contracts are now writing the operating-system layer over the open formats. The CIOs who treated open-format as a permanent moat are about to discover that open-format was the runway, not the destination, and the destination is a named enterprise stack with a named owner sitting on top of it.

Here's what works: Before the next platform review, ask the chief data officer and head of analytics together: ”for our top three analytical domains, do we have a named lakehouse-to-application-stack alignment, a named data-portability cost, a named exit clause, and a named decision-rights owner if our preferred lakehouse gets absorbed into a stack we did not standardize on?” If the answer is ”we standardized on the open format,” that is the project. The Dremio acquisition is the trigger; the named stack-alignment matrix is the deliverable. The CDO who ships it before Q3 will reframe the next two acquisitions as routine architectural updates instead of contract-rewriting emergencies.

2. Pixxel And Sarvam Just Pitched An AI-Powered Orbital Data Centre For India, And The Sovereign-AI Stack Just Added A Space Layer

The single most under-covered architectural signal of the day is sitting on an Indian education-technology wire most enterprise decks will skim past. Pixxel and Sarvam announced a partnership to launch an AI-powered orbital data centre for India, putting both inference compute and Earth-observation processing on satellites rather than ground-based hyperscaler infrastructure. Read it next to yesterday's sovereign-capital wire from Korea and the HUMAIN One announcement from the Gulf, and the operating thesis sharpens fast. The sovereign-AI stack is no longer a procurement category. It is an architectural category, and ”where the inference happens” is now a sovereignty question alongside ”who owns the data.”

The strategic implication is that the chief data officer's data-residency map just gained a vertical axis. For five years, data residency meant ground regions. Frankfurt, Mumbai, São Paulo, Singapore. After Pixxel-Sarvam, the question is ”for our top three regulated analytical workloads, do we have a named position on orbital and edge inference, with named data-residency commitments, named latency budgets between satellite, edge, and ground, and a named exit path if a regulated customer mandates that inference happens off the public hyperscaler grid entirely?” The CDO whose 2026 architecture review treats inference as something that happens in three named cloud regions on the ground is reading from a 2024 model. The CDO who refactors the architecture review around named ground-edge-orbital tiering will absorb the next regulated-buyer RFP that mandates non-hyperscaler inference as routine cloud architecture work.

The deeper signal is that two of the heaviest investments in sovereign AI inside 90 days have been from outside the United States. Korea wired sovereign capital into a homegrown champion. The Gulf rebranded an AWS partnership as a sovereign operating system. India is now putting inference on satellites with a named Indian agentic-AI vendor (Sarvam) and a named Indian Earth-observation vendor (Pixxel). The pattern is unmistakable. The non-US sovereign-AI playbook is moving faster than the multinational vendor map can update. Expect at least two more named ”sovereign inference at the edge” announcements inside twelve months, expect the first regulated buyer to publish a named ground-edge-orbital tiering architecture by Q4, and expect the first global hyperscaler to publish a ”sovereign inference reference architecture” inside two cycles to defend share.

Here's what works: Before the next data-residency review, ask the chief data officer and chief information security officer together: ”for our top three regulated analytical workloads, do we have a named position on ground-edge-orbital tiering, named data-residency commitments per tier, named latency budgets, and a named exit path if a regulated buyer mandates non-hyperscaler inference?” If the answer is ”we run on three ground regions,” that is the project. The Pixxel-Sarvam announcement is the trigger; the named tiering architecture is the deliverable. The CDO who ships it first will absorb the next sovereign inference mandate as a routine review. The one who waits will be writing the architecture under a regulator's deadline.

3. Zyphra And AMD Just Launched An Alternative-GPU AI Cloud On MI355X Silicon, And The Inference Procurement Map Just Got A Named Second Lane

The cleanest compute-architecture signal of the day is sitting on a stocktitan filing most CIO decks will not pick up before the Q3 capacity review. Zyphra and AMD launched a co-branded AI cloud running on AMD Instinct MI355X GPUs, with the explicit pitch that this is a named alternative inference cloud for buyers who want competitive economics outside the dominant GPU stack. Pair it with the Forbes argument that AI adoption is rising while Gen Z trust is declining, and a different operating thesis lands. The ”we standardize on one GPU vendor and one inference cloud” assumption is collapsing in the same window as the trust premium for the dominant stack is fraying. The two pressures are about to renegotiate the inference scorecard at the same time.

The strategic implication is that the chief technology officer's 2026 inference architecture just gained a named second-lane line item. For three years, inference procurement was scored on ”compatibility with the dominant CUDA-class stack” and ”lowest cost per token in that stack.” After the Zyphra-AMD launch, the question is ”for our top three production inference workloads, do we have a named primary lane, a named alternative lane on a competing GPU stack, named portability budgets between lanes, and a named contractual exit clause if pricing or capacity in the dominant lane becomes a single point of failure?” The CTO whose 2026 procurement plan still treats inference as a single-vendor decision is reading from a 2023 capacity model. The CTO who builds a named two-lane procurement architecture, with measured portability cost and a named lane-switch protocol, will absorb the next inference price shock or capacity squeeze as a routine operating event.

The deeper signal is that the inference-compute conversation is being absorbed into the same vocabulary as the sovereign-AI procurement conversation, the lakehouse acquisition conversation, and the edge-deployment conversation. Each one is a named alternative path with a named exit clause and a named portability cost. The CIO who reads the trade press of compute, sovereign capital, lakehouse consolidation, and edge deployment as one shared procurement vocabulary is reading next quarter's variance commentary before it is written. The one who reads each as a separate domain is going to spend the next two cycles consolidating the conversations after the fact.

Here's what works: Before the next inference capacity review, ask the CTO and head of platform engineering together: ”for our top three production inference workloads, do we have a named primary lane, a named alternative lane on a competing GPU stack, a measured portability cost between lanes, and a named lane-switch protocol if the primary lane suffers a price shock or capacity squeeze?” If the answer is ”we standardize on the dominant GPU vendor,” that is the project. The Zyphra-AMD launch is the trigger; the named two-lane procurement architecture is the deliverable. The CTO who ships it before Q3 will absorb the next capacity squeeze as a routine review.

4. Blaize And Winmate Just Inked A $15 Million Edge-AI Partnership For Defense, Border, And Maritime, And The ”Inference At The Edge” Layer Just Got Its First Named Vendor Pair For Hardened Environments

The cleanest defense-and-edge-AI signal of the day is sitting on a marketchameleon wire most enterprise architecture decks have not yet picked up. Blaize Holdings (Nasdaq: BZAI) and Winmate signed a $15 million strategic partnership integrating Blaize AI inference silicon into Winmate rugged computing devices for defense, border security, maritime, and field-medical deployments, structured as a renewable three-year agreement with a sized first-year revenue commitment. Read it as one signal alongside the broader ”where does AI inference happen” conversation, and the fourth floor of the new operating stack comes into focus. After the data layer (SAP-Dremio), the orbital layer (Pixxel-Sarvam), and the alternative-GPU layer (Zyphra-AMD), the hardened edge layer for environments where connectivity is unreliable or contested just got its first named vendor pair with a numbered contract.

The strategic implication is that the chief operations officer or head of field operations now needs a named position on hardened edge inference. For five years, ”edge AI” was a category most enterprise architecture decks treated as a discount-priced version of cloud AI. After this partnership lands at a named $15 million contract value with defense, border, and maritime use cases, the question is ”for our top three field-operations workloads, do we have a named hardened-edge inference partner, named operational reliability commitments, named data-sovereignty commitments at the edge, and a named upgrade path when the silicon vendor releases the next generation in 18 months?” The COO whose 2026 plan still treats edge as a connectivity backup category is reading from a 2022 model. The COO who builds a named hardened-edge inference architecture with named lifecycle commitments will absorb the next regulated-buyer RFP for field operations as a routine procurement event.

The deeper signal is that ”where the inference happens” is no longer a single-axis question. The inference can sit on a hyperscaler ground region, on a sovereign overlay co-branded by a national fund, on an orbital data centre, or on a Blaize-class hardened edge device under a Winmate-class enclosure inside a vehicle, vessel, or hospital. Four named architectural tiers, each with a named vendor pattern, each with a named procurement clause. The buyer who walks into Q3 with one integrated tiering map, one named owner, and one named portability cost between tiers will run inference procurement at a different cycle from the buyer still treating it as one cloud decision.

Here's what works: Before the next field-operations review, ask the COO and chief technology officer together: ”for our top three field-operations workloads, do we have a named hardened-edge inference partner, named operational-reliability commitments, named data-sovereignty commitments at the edge, and a named silicon-upgrade path inside 18 months?” If the answer is ”we use cloud inference with offline cache,” that is the project. The Blaize-Winmate partnership is the trigger; the named hardened-edge inference architecture is the deliverable. The COO who ships it first will absorb the next regulated field-operations RFP as a routine procurement event.

5. Forbes Just Put A Number On The Gen Z AI Trust Decline, And The Adoption-Versus-Trust Curve Just Got Its First Named Crossover Point

The cleanest research-and-trust signal of the day is sitting on a Forbes column most product and marketing decks will skim past as ”consumer sentiment news.” Forbes named the contradiction explicitly: AI adoption is rising while Gen Z trust in AI is declining, and the curve crosses at a point that is going to land on the customer-experience operating committee inside two cycles. Pair it with the thecudaily-CDO Magazine reporting that the biggest barrier to effective AI adoption is not the technology, it is the people, process, and trust dimensions of the organization adopting it, and a different operating thesis lands. The trust premium for AI in customer-facing surfaces is fraying at the same moment the adoption metrics are accelerating. The two curves are going to meet inside the next four operating cycles, and the customer-experience leader who has not named the meeting point already is about to discover it on a quarterly NPS report.

The strategic implication is that the chief customer officer's 2026 plan needs a named Trust-Adoption Crossover line. For three years, customer-experience teams have been measured on ”AI feature deployment velocity” as if adoption alone was the goal. After this Forbes piece and the broader CDO-Magazine roundtable read, the question is ”for our top three customer-facing AI surfaces, do we have a named trust metric per surface, a named adoption metric per surface, a named decision rule for when to slow adoption to defend trust, and a named owner for the crossover signal?” The CCO whose 2026 dashboard still leads with adoption velocity alone is reading from a 2023 KPI sheet. The CCO who builds a named Trust-Adoption Crossover dashboard, with a named decision authority to slow rollouts when the curves are about to cross, will be the one who absorbs the next ”AI is fine, but I do not trust it for this” customer cohort as a routine product decision rather than a brand emergency.

The deeper signal is that the trust dimension is the same operating muscle that the labor-liability dimension named yesterday, the ISO 42001 audit dimension named yesterday, and the named decision-trail dimension JPMorgan executives flagged the week before. Every one of these is a documented chain of named accountability behind a consequential AI decision. Trust is not a vibes metric. Trust is the audit-trail of the customer relationship, written one interaction at a time, and the operating model that survives the next demographic cohort is the one that has named the chain explicitly.

Here's what works: Before the next customer-experience review, ask the chief customer officer and head of product together: ”for our top three customer-facing AI surfaces, do we have a named trust metric per surface, a named adoption metric per surface, a named slow-down rule when trust starts to fall faster than adoption rises, and a named owner of the crossover signal?” If the answer is ”we measure adoption,” that is the project. The Forbes Gen Z piece is the trigger; the named Trust-Adoption Crossover dashboard is the deliverable. The CCO who ships it before Q3 will keep the next AI-in-product decision out of the brand-emergency category.
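One way to make the slow-down rule concrete is to write it as a check a dashboard could run per surface. The sketch below is a minimal illustration only: the function name, the two input series, and the sample figures are all hypothetical, and it assumes trust and adoption are both tracked in percentage points over the same review window.

```python
def should_slow_rollout(trust_scores, adoption_rates):
    """Hypothetical slow-down rule for one customer-facing AI surface.

    Both inputs are ordered readings over the same window, in percentage
    points (an assumption made for this sketch). Returns True when trust
    fell over the window and fell by more than adoption rose.
    """
    if len(trust_scores) < 2 or len(adoption_rates) < 2:
        raise ValueError("need at least two readings per series")
    trust_change = trust_scores[-1] - trust_scores[0]
    adoption_change = adoption_rates[-1] - adoption_rates[0]
    # Trust must be declining, and declining faster than adoption grows.
    return trust_change < 0 and abs(trust_change) > adoption_change


# Illustrative numbers only, not taken from any survey.
falling_fast = should_slow_rollout([72, 68, 61], [30, 33, 36])
holding_up = should_slow_rollout([72, 71, 70], [30, 35, 40])
print(falling_fast, holding_up)
```

The rule is deliberately blunt. A real version would need an agreed trust metric, an agreed window, and the named owner who acts when the check fires.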

6. CIO.com Just Argued GenAI Should Be Treated As A Mission-Critical Enterprise App, And The ”Skunkworks Pilot” Era Of GenAI Procurement Just Got A Named Headstone

The cleanest enterprise-architecture signal of the day is sitting on a CIO.com argument most innovation labs will read and quietly ignore. CIO.com made the case that the CIO remit for GenAI should now be the same as for any mission-critical enterprise application: named SLAs, named identity controls, named audit trails, named compliance posture, and named lifecycle ownership. Pair it with the thecudaily roundtable concluding that the biggest barrier to AI adoption is not the technology but the people, process, and trust dimensions, and the operating template sharpens. The era when GenAI sat in an innovation lab with a ”we will figure out the SLA later” exemption from enterprise IT discipline is over. The next 18 months will reward the CIOs who folded GenAI into the named enterprise-application discipline and punish the ones who left it as a parallel skunkworks track.

The strategic implication is that the CIO's 2026 application portfolio just gained a named ”GenAI as Tier-1 application” reclassification line. For two years, GenAI was a special category with its own procurement rules, its own evaluation rubric, and its own exemption from named SLA discipline. After the CIO.com framing, the question is ”for every GenAI surface in production or piloted at scale, do we have a named SLA, named identity controls, named audit trails, a named compliance posture, and a named lifecycle owner, the same way we would for an ERP module or a CRM workflow?” The CIO whose 2026 portfolio still treats GenAI as a parallel innovation track is reading from a 2024 organizational chart. The CIO who reclassifies GenAI as a Tier-1 enterprise application discipline, with named owners and named SLAs, will absorb the next operating-committee challenge from the CFO as a routine portfolio review.

The deeper signal is that the human-process layer is the bottleneck the technology vendors keep underpricing. Every one of the named procurement layers from this week, the lakehouse layer, the orbital layer, the alternative-GPU layer, the hardened-edge layer, the trust-adoption layer, lands or fails on the same question: did the buyer's operating model name the discipline, the owner, and the cadence? The technology is the easy part. The operating model is what makes or breaks the next four review cycles.

Here's what works: Before the next portfolio review, ask the CIO and chief operating officer together: ”for every GenAI surface in production or piloted at scale, do we have a named SLA, named identity controls, named audit trails, a named compliance posture, and a named lifecycle owner, scored on the same rubric as ERP and CRM?” If the honest answer is ”GenAI is a parallel track,” that is the project. The CIO.com framing is the trigger; the named Tier-1 reclassification is the deliverable. The CIO who ships it first runs Q4 from a single application portfolio. The one who waits will be defending parallel tracks to a CFO who has already moved on.

7. Energi.AI Just Acquired Sustainability Advisor Cemasys, And The Carbon-Data-Plus-AI Vertical Just Got Its First Named Operator Roll-Up

The cleanest vertical-AI consolidation signal of the day is sitting on an ESGToday wire most cross-functional architecture decks will not pick up before the next sustainability review. Carbon data platform Energi.AI acquired sustainability advisory Cemasys, folding the advisory delivery layer directly into the data platform layer and creating a named carbon-data-plus-AI operator with consolidated client engagements across Northern Europe. Read it next to the Sia Partners argument that the real-time bank is being rewritten at the intersection of AI, regulation, and competition, and the broader pattern lands. The next phase of AI consolidation is not horizontal ”AI for everything” platforms. It is vertical ”AI plus regulated-domain data plus advisory delivery” operators that own the full client outcome from raw data to regulated report.

The strategic implication is that the chief sustainability officer's 2026 vendor list just gained a named ”carbon-data plus advisory roll-up” line. For two years, carbon data and sustainability advisory were two separate vendor categories on the procurement scorecard. After Energi.AI-Cemasys, the question is ”for our top three regulated sustainability disclosures, do we have a named integrated carbon-data-plus-advisory operator, a named contingency vendor for the same outcome, named data-portability commitments if the integrated vendor is acquired into a larger stack, and a named owner of the procurement decision?” The CSO whose 2026 plan still treats carbon data and advisory as separate procurement lanes is reading from a 2024 vendor map. The CSO who restructures procurement around named integrated operators, with named exit terms, will absorb the next regulated disclosure as routine reporting work.

The deeper signal is that this same vertical-roll-up pattern is going to land in legal-AI, healthcare-AI, banking-AI, and supply-chain-AI inside the next four cycles. Every regulated vertical has a ”data platform plus advisory delivery” operator waiting to be assembled. The procurement teams that have already named the pattern will negotiate from named precedent. The ones that have not will spend the next quarter discovering the consolidation in the news cycle and writing the procurement clause under deadline pressure.

Here's what works: Before the next sustainability vendor review, ask the chief sustainability officer and chief procurement officer together: ”for our top three regulated sustainability disclosures, do we have a named integrated carbon-data-plus-advisory operator, a named contingency vendor, named data-portability commitments, and a named owner of the integrated procurement decision?” If the answer is ”we contract data and advisory separately,” that is the project. The Energi.AI-Cemasys acquisition is the trigger; the named integrated-operator procurement architecture is the deliverable. The CSO who ships it before the next disclosure cycle will close the regulated report on schedule and on budget. The one who does not will discover the consolidation in a procurement-emergency thread.

Signal vs. Noise

🟢 Signal: Cybersecurity structural influence climbed 91 percent on a 352-article base, Regulatory Compliance influence rose 47 percent on a 452-article base, Machine Learning influence rose 28 percent on a 506-article base, and Data Analytics influence rose 24 percent on a 363-article base. Read those four numbers as one shape and the Tuesday-morning operating frame becomes obvious. The conversation has rotated from ”which model wins” into the load-bearing layer underneath: the security posture every new agentic AI surface depends on, the regulatory documentation chain every regulated buyer needs, the machine-learning discipline every operating model rests on, and the analytical layer every business decision sits on top of. Structural influence rising at all four layers in the same window means the operational center of gravity has shifted from the application tier to the platform tier. The CIO who walks into Wednesday's review with a named owner per layer (security, compliance, ML, analytics) moves two cycles cleaner than the CIO still framing AI as a procurement question for the application layer.

🔴 Noise: Data Management still pulled 327 mentions but lost 1.6 percent of structural influence, Innovation pulled 249 mentions while shedding 2.7 percent, Healthcare pulled 240 mentions while losing 1.3 percent, Data Analysis pulled 232 mentions while losing 16 percent, and Data Integration pulled 228 mentions while losing 21 percent. Each of those five labels is still attached to a flood of vendor announcements; the operational conversation has moved past them as undifferentiated headers. ”Data Integration” has fragmented into the named lakehouse-acquisition layer, the named alternative-GPU lane, and the named hardened-edge tier. ”Innovation” has fragmented into the named CIO-as-Tier-1-portfolio reclassification. ”Data Analysis” has fragmented into the named Decision Intelligence overlay. Procurement filters still keyword-screening on the legacy generic terms are filtering for vendor marketing, not buyer signal. Rebuild the filter around named operating layers and inbound vendor relevance roughly doubles inside two months.

From the 190K

We scanned 190,000 articles this week. Here's what no one is talking about:

The pattern of the day is that the AI stack is being absorbed into named enterprise operating systems at every architectural floor at once, not sequentially: the lakehouse floor (SAP-Dremio), the orbital floor (Pixxel-Sarvam), the GPU-cloud floor (Zyphra-AMD), the hardened-edge floor (Blaize-Winmate), the customer-trust floor (Forbes Gen Z), the enterprise-application discipline floor (CIO.com mission-critical reclassification), and the regulated-vertical operator floor (Energi.AI-Cemasys), all in 18 hours.

Watch the desks separately and you would call this seven unrelated stories. A German enterprise-software vendor acquiring a US data lakehouse. An Indian Earth-observation startup partnering with an Indian agentic AI vendor on space-based inference. A US AI-cloud startup launching on competing GPU silicon. A US edge-AI silicon vendor signing a hardened-device partnership. A trust-and-adoption research piece from a New York consumer publication. An enterprise-IT op-ed from a US trade magazine. A Norwegian carbon-data platform absorbing a Nordic sustainability advisor. Read them as one substrate and the picture sharpens fast. Seven different consolidation patterns, in seven different geographies, in seven different operating dimensions, all renegotiated the multinational vendor's procurement scorecard, application-portfolio map, and operating-model discipline inside the same Tuesday morning. The strategic conversation in Tier-1 boardrooms is still framed as ”buy AI capabilities versus build them.” The actual operating frontier is ”name the floor of the stack, name the owner of that floor, and name the portability cost between floors before the next acquisition redraws the map.”

The operational implication is that the 2026 multinational AI architecture cycle will be won by the firm that consolidates these seven conversations into one named ”Stack-Floor Map,” with one integrated owner per floor, one quarterly cadence, and one integrated dashboard covering the data-platform floor, the orbital-and-edge floor, the alternative-compute floor, the customer-trust floor, the enterprise-application discipline floor, and the regulated-vertical operator floor. The firms that let the seven conversations run in parallel will discover the duplication in the Q4 audit, when the cost of consolidating after the fact is two to three times the cost of consolidating before. The firms that consolidate now will run multinational AI architectures with a single named owner per floor, fewer surprise variances, and a real signature on every consequential procurement decision when the next acquisition lands.

🔍 Below the surface: Here's how you spot real infrastructure: when 794 articles cite Machine Learning with rising structural influence, 714 cite Regulatory Compliance the same way, and the frame quietly shifting under both is the move from ”tooling” to ”named operating discipline with named owners per floor of the stack,” that is not a vendor cycle. That is an architectural rewrite. The shift does not show up in any vendor leaderboard. It shows up in the integration patterns, the role redefinitions, and the procurement vocabulary. The trade publications pulling these threads together (the corporate-blog acquisitions desk, the Indian space-tech press, the GPU-financial-newswire desk, the defense-edge-silicon press, the trust-and-research consumer press, the CIO trade press, and the ESG-data acquisitions press) are running a quarter ahead of the Tier-1 analyst houses, which are running two quarters ahead of operating-committee dashboards. The firms that read the trade press of the operating function adjacent to their own are reading next quarter's variance commentary before it is written.

By The Numbers

- SAP filed an intent to acquire data lakehouse vendor Dremio, folding open-format Iceberg compute and federated lakehouse access under SAP Business Data Cloud — The cleanest single-line reframe of the lakehouse procurement conversation in a year. Drop it on the next platform review and the ”we standardized on the open format” assumption reframes itself in 30 seconds.

- Blaize and Winmate signed a $15 million strategic partnership for hardened edge AI in defense, border, maritime, and field-medical deployments — The first numbered, multi-year edge-AI contract for hardened operating environments to land with named vendor pairing. Field-operations playbooks missing a named hardened-edge inference partner are operating from a 2022 connectivity assumption.

- Google announced a $750 million innovation fund alongside the Gemini Enterprise Agent Platform launch, with nearly 75 percent of Google Cloud customers using its AI products — The hyperscaler ecosystem-funding response to the sovereign-AI overlay pressure. Cloud architecture reviews missing a named position on co-branded agent ecosystems are reading from a 2023 procurement template.

- Cybersecurity structural influence climbed 91 percent week over week on a 352-article base, while Regulatory Compliance influence rose 47 percent and Machine Learning rose 28 percent — The signature of categories that have crossed from undifferentiated header into named operating language at the load-bearing layer of the stack. Procurement filters still keyword-screening on the legacy generic terms are filtering for vendor marketing, not buyer signal.

- The bridge concept “Data Analysis” appeared across Microsoft Partner Ecosystem, Artificial Intelligence, Private Equity, and AI in Medicine in five different articles in one window, while “Strategic planning” bridged Regulatory Environment, Litigation, Innovation, and Dispute Resolution — The cleanest leading indicator that the operating frame inside enterprise AI has rotated from “tool ownership” to “named decision discipline across operator stacks and across regulated domains.” The CTO whose dashboard still leads with “AI initiatives” as a single bucket is two cycles behind operator-grade peers.

- The edge-AI market is forecast to grow from $11.8 billion in 2025 to $56.8 billion by 2030, an annual growth rate near 37 percent, according to figures cited in the Blaize-Winmate partnership announcement — The operational read of the week is that the edge layer is not a discount tier. It is a named architectural floor with its own procurement vocabulary, its own vendor pairings, and its own contract size. Most 2026 architecture reviews still treat edge as a connectivity backup category.

- Pixxel and Sarvam announced an AI-powered orbital data centre for India, putting inference and Earth-observation processing on satellites rather than ground-based hyperscaler infrastructure — The first named “sovereign inference happens off the public hyperscaler grid” architectural commitment in a Tier-1 emerging market. Cloud architecture maps missing a named ground-edge-orbital tiering line are reading from a 2024 residency model.

- Carbon data platform Energi.AI acquired sustainability advisory Cemasys, creating a named integrated carbon-data-plus-advisory operator across Northern Europe — The first named vertical-roll-up in the regulated-sustainability disclosure space. Procurement scorecards still treating carbon data and sustainability advisory as two separate vendor lanes are operating from a 2024 vendor map.
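For anyone who wants to check the edge-AI growth figure in the list above before dropping it into a review deck, the arithmetic is a standard compound annual growth rate. The sketch below is a minimal illustration, not part of the cited announcement: the function name `cagr` is hypothetical, and it assumes five compounding periods between 2025 and 2030.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.37 means 37 percent)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the forecast above, in billions of dollars.
# Assumption: 2025 to 2030 is treated as five compounding periods.
edge_ai_growth = cagr(11.8, 56.8, 2030 - 2025)
print(f"Implied annual growth: {edge_ai_growth:.1%}")
```

Under that five-period assumption the result lands just under 37 percent, which matches the "near 37 percent" rate quoted in the forecast.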

Deep Dive: The AI Stack Just Split Into Named Floors, And Most CIOs Are Still Looking At The Lobby

Every DJ who has ever played a residency in a multi-floor club knows the moment when the building stops being one room and starts being a stack of rooms with different crowds, different BPMs, and different lighting on each floor. The mainstage downstairs wants the headline. The small room upstairs wants the dub plates. The basement wants the deep cuts. The rooftop wants the curated sunset set. Same building, same DJ, four entirely different sets, four entirely different procurement decisions on which records to bring. That is exactly what Tuesday told us about AI architecture. The building did not just stack a new floor. It revealed that there were already six floors, and the multinationals still booking “the venue” as one line item are about to discover they have been ignoring four crowds for two years.

The Data-Platform Floor

The SAP-Dremio acquisition is the bass drop on the lakehouse floor. The era of vendor-neutral open-format storage as a permanent moat is closing, and the buyer is now choosing whose enterprise operating system runs the analytical layer of the company. The CDO who walks into the next platform review with a named lakehouse-to-application-stack alignment, named portability budgets, and named exit clauses is the CDO who absorbs the next acquisition as a routine architectural update. The CDO who keeps treating the lakehouse as a horizontal utility is going to spend Q4 retrofitting contract terms under a new owner's roadmap.

The Orbital And Edge Floor

The Pixxel-Sarvam announcement is the snare on the inference-residency floor. The Blaize-Winmate partnership is the hi-hat. Together they say the same thing with two different drum sounds: the question of “where the inference happens” is no longer a single ground-region choice. It is a named ground-edge-orbital tiering decision with named latency budgets, named data-residency commitments per tier, and named exit paths if a regulated buyer mandates non-hyperscaler inference. The CDO who refactors the residency map around named tiering will absorb the next regulator-mandated architectural change as routine review work. The one who keeps a flat ground-region map will discover the gap in the next regulated-buyer RFP.

The Alternative-Compute Floor

The Zyphra-AMD launch is the vocal hook on the GPU-cloud floor. The line is unmistakable: the inference scorecard now has a named primary lane, a named alternative lane on a competing GPU stack, named portability budgets, and a named lane-switch protocol if the dominant lane suffers a price shock or capacity squeeze. The CTO who builds the named two-lane procurement architecture will absorb the next inference price shock as a routine operating event. The CTO who keeps a single-vendor inference plan will be writing the lane-switch protocol under a CFO budget challenge.

The Customer-Trust And Operating-Discipline Floor

The Forbes Gen Z trust piece and the CIO.com mission-critical reclassification piece are the operating-muscle vocal at the top of the building. The two arguments are the same operating muscle in two different keys. Trust without named SLAs is theatre. Adoption without named trust metrics is acceleration into a brand emergency. The CCO who builds a named Trust-Adoption Crossover dashboard, the CIO who reclassifies GenAI as a Tier-1 enterprise-application discipline, and the CSO who restructures vendor procurement around named integrated operators (Energi.AI-Cemasys pattern) are the three roles that will absorb the next 18 months of cross-functional pressure as routine operating updates.

What Actually Works

- Stand up a Stack-Floor Map with one named owner per floor. CIO, CDO, CTO, CISO, CCO, and CSO each own one named floor. One integrated dashboard covering the data-platform floor, the orbital-and-edge floor, the alternative-compute floor, the customer-trust floor, the enterprise-application discipline floor, and the regulated-vertical operator floor. Refreshed monthly. Without a named owner per floor, the stack consolidates into one CIO line item that can never make a real decision.

- Refactor the AI vendor scorecard around named portability cost between floors. Every multi-year AI commitment gets one named portability budget per adjacent floor (data-to-application, ground-to-edge, primary-to-alternative compute), one named lane-switch protocol, and one named cost-to-switch number. Single-vendor standardization is the 2024 assumption.

- Reclassify every production GenAI surface as a Tier-1 enterprise application. Named SLA, named identity controls, named audit trails, named compliance posture, and named lifecycle owner per surface. The CIO.com reclassification framing is the trigger; the named portfolio update is the project. Skunkworks pilots with no SLA discipline are operating debt waiting to land on a CFO desk.

- Build the named Trust-Adoption Crossover dashboard. Every customer-facing AI surface gets a named trust metric, a named adoption metric, a named slow-down rule, and a named owner of the crossover signal. The Forbes Gen Z piece named the question; the named dashboard is the project.
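The slow-down rule in the last item is the piece most teams leave undefined, so here is one way it could be written down. This is a sketch under stated assumptions, not a method from the Forbes piece: the `SurfaceReading` fields, the 0-to-1 scoring, and the linear one-period projection in `should_slow_down` are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class SurfaceReading:
    period: str
    trust: float     # hypothetical trust score, 0.0 to 1.0
    adoption: float  # hypothetical adoption score, 0.0 to 1.0


def should_slow_down(readings: list[SurfaceReading], lookahead: int = 1) -> bool:
    """True when adoption has met or passed trust, or is projected to
    within `lookahead` periods if the latest trend in the gap continues."""
    if len(readings) < 2:
        return False  # not enough history to read a trend
    prev, last = readings[-2], readings[-1]
    gap_now = last.trust - last.adoption
    if gap_now <= 0:
        return True  # the curves have already crossed
    gap_change = gap_now - (prev.trust - prev.adoption)
    projected_gap = gap_now + gap_change * lookahead
    return projected_gap <= 0


# Illustrative readings only; these numbers are made up for the example.
closing_fast = [
    SurfaceReading("Q1", trust=0.70, adoption=0.40),
    SurfaceReading("Q2", trust=0.62, adoption=0.50),
]
holding_steady = [
    SurfaceReading("Q1", trust=0.70, adoption=0.40),
    SurfaceReading("Q2", trust=0.70, adoption=0.45),
]
print(should_slow_down(closing_fast), should_slow_down(holding_steady))
```

The design choice worth debating is the projection: a linear read of the last two periods is crude, but it gives the named owner of the crossover signal a rule that fires before the curves cross instead of after.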

The set list is changing because the building itself just changed shape. The DJ who keeps spinning the unified main-room set (one cloud, one data layer, one inference vendor, one customer-trust assumption, one application portfolio, one vendor procurement scorecard) is going to play to a half-empty mainstage while four crowds upstairs and downstairs are dancing to sets the DJ never booked. The DJ who hears the floor split, names the owners per room, and mixes a different verse for each floor, is the one whose Wednesday morning calendar fills up. The unified-stack set is the support act now. Mix it for the six floors the building already grew.

What's Coming

The First Tier-1 Enterprise To Publish A Named Stack-Floor Map With An Owner Per Floor

The SAP-Dremio acquisition, combined with the Pixxel-Sarvam orbital announcement and the Blaize-Winmate edge partnership, is going to force a named architectural reshape inside the next two cycles. The next move is the first US or European Tier-1 enterprise to publish a named stack-floor map with one named owner per floor (data platform, orbital and edge, alternative compute, customer trust, application discipline, regulated-vertical operator). That announcement is probably one to two quarters out. The CIOs who have already drafted the map will fold the public version in cleanly. The CIOs who have not will be writing the map while the next acquisition redraws the floor plan again.

The First Multinational Bank To Publish A Named Trust-Adoption Crossover Dashboard For Customer-Facing AI

The Forbes Gen Z piece is the trigger. The next move is the first multinational consumer bank or large retailer to publish a named Trust-Adoption Crossover dashboard, with a named trust metric per surface, a named adoption metric per surface, and a named slow-down rule when the curves are about to cross. That announcement is probably one quarter out. The CCOs who have already drafted the dashboard will read the public version with the work already done. The ones that have not will spend the next quarter writing the slow-down rule under a brand-emergency thread.

The First Regulated Buyer To Publish A Named Ground-Edge-Orbital Inference Tiering Architecture

The Pixxel-Sarvam announcement is the trigger. The next move is the first regulated buyer (defense, healthcare, or banking) to publish a named ground-edge-orbital tiering architecture, with named latency budgets per tier, named data-residency commitments per tier, and a named exit path if a regulator mandates non-hyperscaler inference. That announcement is probably one to two quarters out. The CISOs who have already drafted the tiering will absorb the public version as routine architectural alignment. The ones that have not will be writing the tiering under a regulator deadline.

For Your Team

Strategic purpose: Wednesday is the day this week's signals get translated into one integrated Stack-Floor Map before Thursday's architecture review. The work today is not another briefing. It is the conversation that names one signature line per floor of the stack: data platform, orbital and edge, alternative compute, customer trust, enterprise application discipline, and regulated-vertical operator. Everything else is commentary.

Wednesday's meeting prompt: “If the lakehouse just got acquired into an enterprise application stack, the inference layer just split into ground, edge, and orbital tiers, the GPU cloud just got a named alternative lane, and the trust dimension is fraying at the same speed adoption is rising, who in this room owns the named one-page Stack-Floor Map across our top three multinational architectures, and is that owner one person, or six people who have never been in the same room?”

The Stack-Floor Map Framework:

- One named owner per floor of the stack. CDO owns the data-platform floor, CISO owns the residency-and-edge floor, CTO owns the alternative-compute floor, CCO owns the customer-trust floor, CIO owns the enterprise-application-discipline floor, CSO owns the regulated-vertical operator floor. One page, one cadence, one dashboard. If the six accountability conversations land on three desks with overlapping owners, the framework is not real.

- Named portability cost between adjacent floors on the AI vendor scorecard. Every multi-year AI commitment gets one named portability budget per adjacent floor, one named lane-switch protocol, and one named cost-to-switch number. Single-vendor standardization is the 2024 assumption.

- Named Tier-1 reclassification of every production GenAI surface. SLA, identity controls, audit trails, compliance posture, and lifecycle owner, scored on the same rubric as ERP and CRM. The CIO.com framing named the question; the named reclassification is the deliverable.

- Named Trust-Adoption Crossover dashboard for every customer-facing AI surface. Trust metric, adoption metric, slow-down rule, named owner of the crossover signal. The Forbes Gen Z piece named the trigger; the named dashboard is the project.

- Named integrated-operator vendor lane for every regulated vertical. Carbon, legal, healthcare, banking, supply chain. Integrated data-plus-advisory operator with named exit terms and named contingency vendors. The Energi.AI-Cemasys acquisition named the category; the named lane is the deliverable.
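The first item in the framework above is easy to agree with and easy to fudge, so it helps to make the "one named owner per floor" test mechanical. The sketch below is a minimal illustration: the owner assignments come from the framework, while the floor keys, the `STACK_FLOOR_MAP` name, and the `audit_floor_map` helper are hypothetical.

```python
from collections import Counter

# Owner assignments as listed in the framework above.
# The floor keys are illustrative labels, not an official taxonomy.
STACK_FLOOR_MAP = {
    "data-platform": "CDO",
    "residency-and-edge": "CISO",
    "alternative-compute": "CTO",
    "customer-trust": "CCO",
    "enterprise-application-discipline": "CIO",
    "regulated-vertical-operator": "CSO",
}


def audit_floor_map(floor_map: dict[str, str]) -> list[str]:
    """List the problems: floors with no owner, and owners holding
    more than one floor (the overlapping-desks failure mode)."""
    problems = []
    for floor, owner in floor_map.items():
        if not owner:
            problems.append(f"{floor}: no named owner")
    counts = Counter(owner for owner in floor_map.values() if owner)
    for owner, floors_held in sorted(counts.items()):
        if floors_held > 1:
            problems.append(f"{owner}: owns {floors_held} floors")
    return problems


# A map where the CIO has quietly absorbed the customer-trust floor.
overlapping = {**STACK_FLOOR_MAP, "customer-trust": "CIO"}
print(audit_floor_map(STACK_FLOOR_MAP))
print(audit_floor_map(overlapping))
```

An empty list from the audit is the bar for walking into the architecture review. Anything else is the six-conversations-on-three-desks pattern the framework warns about.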

Share-worthy stat: SAP filed to acquire Dremio, Pixxel and Sarvam announced an orbital AI data centre for India, Zyphra and AMD launched an alternative-GPU cloud on MI355X silicon, and Blaize and Winmate signed a $15 million hardened-edge defense partnership inside 18 hours. Drop all four on the next architecture review and the “we standardize on one cloud and one inference vendor” assumption reframes itself in 30 seconds.

The Track of the Day

“Our customers can't wait, and they often can't rely on the cloud. They need AI that runs where the work happens.”

— Dinakar Munagala, CEO, Blaize

Today's set: “Where The Streets Have No Name” by U2, mixed into “Architecture & Morality” by OMD. Bono named the moment that walked into every multinational architecture review on Tuesday morning, the moment when the inference cannot wait for the named city, the named region, the named cloud, the named ground-based hyperscaler. Sometimes the work happens in a vehicle, in a vessel, in a hospital corridor, on a satellite, on the alternative-GPU lane, in the lakehouse-being-acquired, on the customer-facing surface where Gen Z just slowed adoption to defend trust. OMD named the answer: architecture and morality, in the same breath, in the same dashboard, with a named owner per floor of the building. SAP folding Dremio into the data-platform floor. Pixxel and Sarvam putting inference on satellites for the orbital floor. Zyphra and AMD opening the alternative-compute floor. Blaize and Winmate hardening the edge floor. Forbes naming the trust floor. CIO.com naming the application-discipline floor. Energi.AI absorbing Cemasys on the regulated-vertical floor. The DJ who keeps spinning the unified main-room set is going to play to a half-empty mainstage while four crowds upstairs and downstairs are dancing to sets the DJ never booked. The DJ who hears the floor split, names the owners per room, and mixes a different verse for each floor, is the one whose Wednesday morning calendar fills up.

Yves Mulkers, your data DJ, mixing 190,000 articles into the tracks that actually matter.

We scanned 190,000 articles this week so you don't have to. Data Pains → Business Gains.

Published: May 5, 2026 | Curated by Yves Mulkers @ Ins7ghts

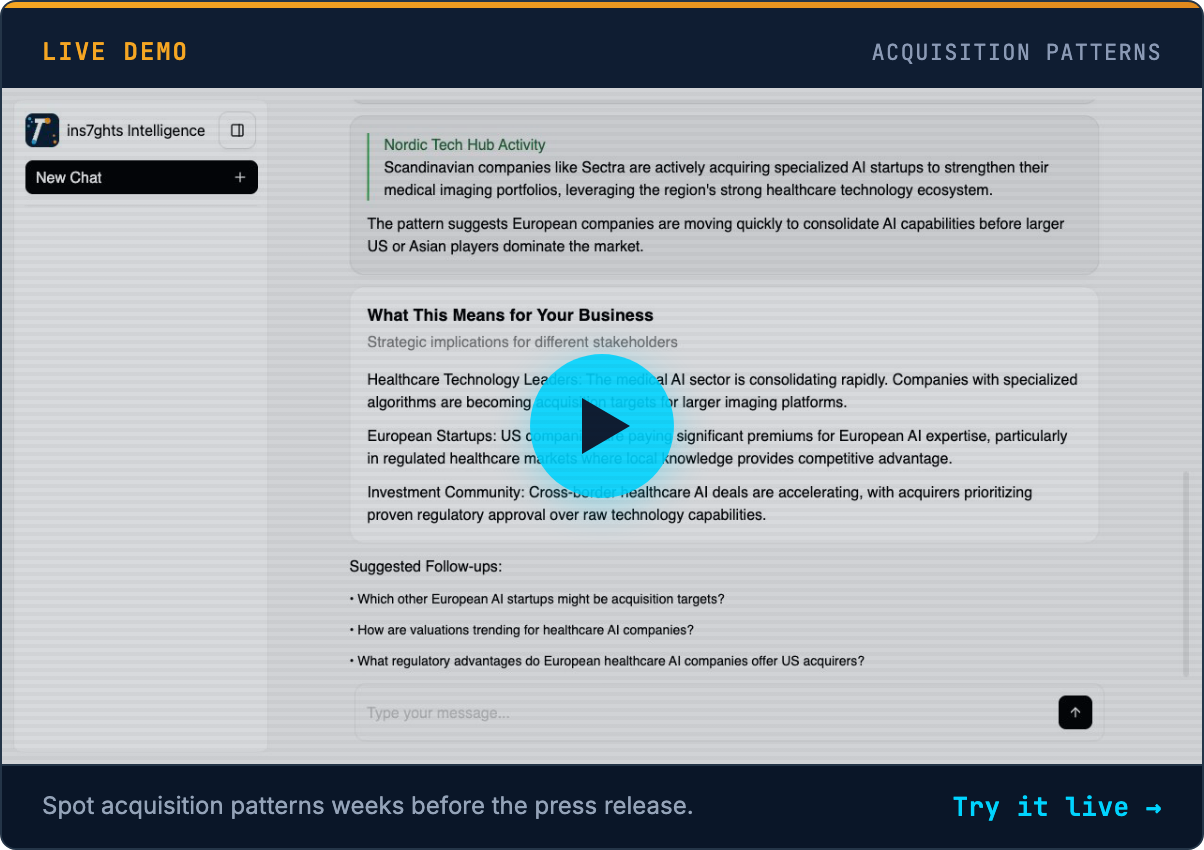
