So, What Actually Happened?

Saturday morning, and the bassline this week landed on the four floors of the AI stack most procurement teams treat as fixed: the regulatory floor (the rulebook), the workforce floor (who does the work), the compute floor (where the workload runs), and the security floor (what holds it together). Inside seventy-two hours, EU lawmakers struck a deal to soften the AI Act, the Pentagon confirmed 100,000 AI agents already deployed inside federal operations, Anthropic committed $200 billion to Google Cloud for compute and chips, and security researchers named thousands of vibe-coded apps as a live corporate data leak source. We scanned 190,000 articles this week so you don't have to, and the procurement plan you walked into Monday with is not the one you walk out of Friday with.

The Bottom Line: The ”EU AI Act is the compliance ceiling, the workforce is human, the cloud is multi-vendor, and the apps are written by engineers we can audit” assumption stack just had every single floor named in the same week. The CIO who walks into Monday with one named answer per floor (compliance reset posture, AI-agent governance register, single-vendor compute concentration plan, vibe-coded inventory audit) runs the next four cycles from architecture. Everyone else is reading from a 2024 brief.

The Tracks That Matter

1. The EU Just Softened Its Own AI Act, And The ”EU AI Act Is The Compliance Ceiling” Posture Just Got Its First Named Reset Cycle

The cleanest regulatory signal of the week is that the EU itself broke the EU AI Act framing most enterprise compliance teams budgeted around. EU lawmakers struck a provisional deal to soften the AI Act on Thursday, walking back high-risk system thresholds, transparency obligations, and parts of the documentation cycle that compliance teams have been building toward for two years. The ”we are racing to be EU AI Act compliant by August” posture that anchored most 2026 governance budgets just got its first named reset signal from Brussels itself.

The strategic implication: the chief compliance officer and head of AI governance just gained a “regulatory reset cadence” line on the scorecard that did not exist on Monday. For two years, EU AI Act compliance was a one-direction march toward a fixed deadline. After this provisional deal, the question becomes: for our top three production AI workloads, do we have a posture if the high-risk classification moves, a contingency if transparency obligations soften, and a named decision-rights owner if our August roadmap suddenly has six months of slack we did not budget? The opposite risk is also real: the deal is provisional and could be re-tightened by Parliament before final adoption.

The deeper signal is that AI regulation is now bidirectional. For two years it was build-up; this week it became build-up plus walk-back, sometimes in the same quarter. Read alongside the-decoder's read that Europe's answer to AI regulation complexity is to delay most of it, and the operating shape sharpens. The CCO who already drafted a ”regulatory rate-of-change” cadence with named reset triggers absorbs Q3 cleanly. The one running a single-deadline plan will be redrawing the architecture under a Parliament timeline they did not see coming.

Here's what works: Ask the CCO and head of AI governance together: for our top three production AI workloads, do we have a reset posture if the EU AI Act high-risk threshold moves, a delay-scenario plan, and a named owner if the August deadline slides by six months? “We are on track for August” is not a posture; it is a moving target.

2. The Pentagon Just Confirmed 100,000 AI Agents Already Inside Federal Operations, And The ”AI Workforce Strategy Is A Slide” Frame Just Got Its First Named Federal Operating Stack

The cleanest workforce signal of the week is sitting on a FedGov Today wire most CIOs will read as ”government news.” The Pentagon confirmed 100,000 AI agents already deployed inside Defense operations, with Matthew Cornelius noting that ”agencies must now start treating AI agents much like they treat human employees.” The ”AI workforce strategy is a slide deck for next quarter” frame that has anchored most enterprise HR plans for two years just got its first named federal operating stack at six-figure scale.

The strategic implication: the chief HR officer and the chief AI officer just gained an ”AI agent workforce register” line on the operations scorecard. For two years, AI workforce planning was ”we have a copilot pilot in three teams.” After the Pentagon names 100,000 deployed agents, the question becomes: for our top three workflows, do we have an inventory of which AI agents are operating today, named access controls per agent, an audit trail for agent decisions, and a decision-rights owner if an agent acts outside its scope? Cornelius framed it directly: ”the challenge is no longer simply deploying AI technology, it is determining how humans and AI systems work together.”

The deeper signal is that AI governance is rotating from ”models we license” into ”agents we employ.” The federal government just made the rotation visible at scale. Read alongside Collibra launching an AI Command Center to manage AI agents and Box launching Box Automate to orchestrate agentic workflows, and the operating shape lands. The AI-agent-as-employee model is now in production at one of the world's largest workforces, with named vendors building the management layer around it.

Here's what works: Ask the CHRO and chief AI officer together: for our top three workflows, do we have an agent inventory, named access controls per agent, an audit trail per agent decision, and a named owner if an agent acts outside scope? If the answer is “we use Copilot,” then that one line is the entire HR-side AI register, and building the real one is the project.
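For teams that want to see what an agent register could look like before buying a tool for it, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `AgentEntry` fields, the `register_gaps` helper, and the two sample agents are hypothetical and not taken from the Pentagon, Collibra, or Box. It simply encodes the questions above (access controls, audit trail, named owner) as fields and reports which ones an entry cannot answer.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentEntry:
    """One row of a hypothetical AI-agent register (field names are assumptions)."""
    name: str
    workflow: str
    access_scopes: list = field(default_factory=list)  # named access controls
    audit_log: Optional[str] = None  # where the agent's decisions are recorded
    owner: Optional[str] = None      # who decides when the agent acts out of scope


def register_gaps(entry: AgentEntry) -> list:
    """Return the register questions this entry cannot answer yet."""
    gaps = []
    if not entry.access_scopes:
        gaps.append("access controls")
    if not entry.audit_log:
        gaps.append("audit trail")
    if not entry.owner:
        gaps.append("decision-rights owner")
    return gaps


# Illustrative entries only; names and locations are made up.
register = [
    AgentEntry(
        name="invoice-triage",
        workflow="accounts payable",
        access_scopes=["erp:read"],
        audit_log="agent-logs/invoice-triage",
        owner="AP lead",
    ),
    AgentEntry(name="copilot", workflow="engineering"),
]

for entry in register:
    print(entry.name, register_gaps(entry) or "complete")
```

An entry that only says "we use Copilot" comes back with all three gaps, which is the point of the exercise: the register is the list of answers, not the list of tools.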

3. A Single AI Lab Just Committed $200 Billion To One Cloud Vendor, And The ”Multi-Cloud Is The AI Compute Default” Procurement Frame Just Got Its First Named Concentration Counter-Reference

The cleanest compute-procurement signal of the week is a $200 billion line item nobody had on a 2024 spreadsheet. Anthropic committed $200 billion to Google Cloud for compute and AI chips in a multi-year infrastructure deal that locks one frontier lab into one hyperscaler at a scale roughly equal to the GDP of New Zealand. The ”multi-cloud is the AI compute default and frontier labs spread their risk” assumption that shaped most enterprise compute roadmaps just got its first named single-vendor concentration counter-reference at a number nobody can ignore.

The strategic implication: the CTO and head of cloud strategy for any enterprise running production AI workloads just gained a ”single-vendor compute concentration scenario” line on the procurement scorecard. For two years, cloud-AI procurement was ”spread across at least two hyperscalers, prefer three.” After this commitment lands, the question becomes: for our top three AI workloads, do we have a posture if our preferred frontier lab is locked into a single hyperscaler that is not on our preferred list, a continuity plan if our chosen model becomes a wrapper over a competitor's compute, and a named cost-pass-through analysis if hyperscaler-of-record pricing moves with the lab's commitment cycle?

The deeper signal is that the model layer and the compute layer are starting to lock together at scale. Read alongside an analyst note on the AI inference chip market accelerating alongside the broader AI buildout, and the architectural shape sharpens. The compute floor is consolidating around fewer named lab-cloud pairs, not more. The CTO who already drafted a ”frontier-lab-to-hyperscaler dependency map” can absorb the next vendor review as routine. The one running a ”we use multiple labs across multiple clouds” framing without checking whose compute actually runs whose model will discover the concentration on the next outage post-mortem.

Here's what works: Ask the CTO and head of cloud strategy together: for our top three production AI workloads, do we know which hyperscaler our chosen frontier lab actually runs on, and do we have a continuity plan if that pairing locks tighter and a cost scenario if hyperscaler pricing moves with the lab's commitment cycle? “We are multi-cloud” is the slide, not the architecture.

4. Security Researchers Just Named Thousands Of Vibe-Coded Apps As A Live Corporate Data Leak Source, And The ”Engineers Wrote It, Engineers Audit It” Posture Just Got Its First Named Generation-Layer Audit Trigger

The cleanest security signal of the week is sitting on a Security Boulevard piece most CIOs will skim past as ”developer news.” Thousands of vibe-coded apps are exposing corporate and personal data, with researchers naming the pattern: AI-generated code shipped without security review, hardcoded credentials in client-side bundles, and personal data leaking through unauthenticated endpoints. The ”our engineers wrote it, our security team audits it” posture that anchored most application security programs for two decades just got its first named audit trigger at the AI-generation layer.

The strategic implication: the CISO and head of application security just gained a ”vibe-coded inventory” line on the controls scorecard that did not exist on Monday. For two years, AI-assisted coding was ”developers use Copilot, we still review the PR.” After the named pattern lands, the question becomes: for our top three product surfaces, do we have an inventory of AI-generated code shipped to production, a named credential-scan cadence on AI-output bundles, a DLP posture for client-side endpoints created by AI tools, and a decision-rights owner if a vibe-coded asset is named in a regulator inquiry? Read alongside ISC2's note that one in three cybersecurity professionals have already encountered AI-powered attacks, and the audit calendar tightens.

The deeper signal is that the application security stack is rotating from ”code written by humans, reviewed by humans” into ”code written by AI, reviewed by AI, with humans named on the audit trail.” The vibe-coded leak pattern is the first operational symptom of the rotation hitting production. The CISO who already drafted a named AI-generation audit cadence absorbs the next security review as a routine evidence pull. The one waiting for a ”specific vibe-coded rule” will be drafting under regulator deadline, with their existing AI-generated code cited as exhibits.

Here's what works: Ask the CISO and head of application security: for our top three product surfaces, do we have a vibe-coded code inventory, a credential-scan cadence on AI-output bundles, a client-side DLP posture, and a named owner inside thirty days of a regulator inquiry? ”Developers review the AI output” is the policy, not the control.
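To make the "credential-scan cadence on AI-output bundles" concrete, here is a minimal Python sketch of the kind of check such a cadence might run. It is an assumption-heavy illustration, not the researchers' method or any vendor's scanner: the three regex rules, the `scan_bundle` helper, and the sample bundle are hypothetical, and a production program would lean on a maintained secret-scanning tool with a far larger rule set.

```python
import re

# Illustrative rules only; real secret scanners ship far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "assigned_secret": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*[\"']([^\"']{12,})[\"']"
    ),
}


def scan_bundle(text: str) -> list:
    """Return (line_number, rule_name) for every suspicious line in a bundle."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, rule))
    return findings


# Made-up client-side bundle; the values are placeholders, not real credentials.
sample = '''const apiKey = "EXAMPLE-not-a-real-key-000";
const region = "eu-west-1";
const awsId = "AKIAIOSFODNN7EXAMPLE";
'''

print(scan_bundle(sample))
```

Run against every client-side bundle an AI tool produced, a check like this turns "developers review the AI output" from a policy statement into an evidence trail with line numbers.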

5. BASF Just Put AlphaEvolve Into Production On Thousands Of Supply Chain Decisions, And The ”AI Optimization Is A Pilot” Frame Just Got Its First Named Industrial Operating Reference

The cleanest enterprise-deployment signal of the week is buried in a Google Cloud blog most CIOs will read as a vendor case study. BASF moved AlphaEvolve into production to manage thousands of supply chain decisions daily, running optimization across procurement, logistics, and inventory at industrial scale. The ”AI optimization is a pilot in one division” frame that anchored most enterprise AI roadmaps for three years just got its first named industrial operating reference at one of the world's largest chemical companies.

The strategic implication: the chief operating officer and head of supply chain for any industrial enterprise just gained an ”AI-optimization production reference” line on the operations scorecard. For three years, AI in supply chain was ”we ran a pilot last quarter, results inconclusive.” After BASF puts AlphaEvolve on thousands of daily decisions, the question becomes: for our top three operational decision streams, do we have an AI-optimization production reference, a measured savings baseline, a named human-override cadence, and a procurement readiness for the next-generation optimization stack? Read alongside DHL Express introducing AI-powered item identification for international shipping, and the named industrial-AI references stack on the same week.

The deeper signal is that AI in operations is rotating from pilot-with-asterisk into production-with-numbers, with named anchors a board can quote. BASF in chemistry, DHL in logistics, the Pentagon in workforce. Three named operating references at industrial scale inside the same week. The COO who already drafted an AI-optimization scorecard with named production references absorbs the next board review as routine. The one still presenting ”AI exploration phase” will read about a competitor's named savings number on the next earnings call.

Here's what works: Ask the COO and head of supply chain together: for our top three operational decision streams, do we have an AI-optimization production reference, a measured savings baseline, a named override cadence, and a procurement readiness plan? “We piloted last quarter” is not a reference; it is the absence of one.

6. AI Just Learned To Say ”I'm Not Sure”, And The ”AI Confidence Calibration” R&D Gap Just Got Its First Named Architectural Patch From An Asian Lab

The cleanest research signal of the week is sitting on a EurekAlert release most enterprise CIOs will scroll past as ”academic news.” KAIST researchers published an approach that lets AI models say ”I'm not sure” on their own, reducing the overconfidence that drives the most dangerous failures in autonomous driving, medical diagnosis, and high-stakes decision support. The ”AI confidence calibration is an unsolved R&D problem” frame that anchored most enterprise risk reviews for two years just got its first named architectural patch from a major Asian research lab.

The strategic implication: the CTO and chief risk officer for any enterprise running AI in high-stakes decisions just gained a ”calibrated-uncertainty roadmap” line on the model scorecard. For two years, AI risk planning had a ”models will sometimes confidently produce wrong answers, manage exposure” footnote. After KAIST names the patch, the question becomes: for our top three high-stakes AI workloads (medical, financial, legal, safety-critical), do we have a calibration-aware model selection cadence, an explicit uncertainty threshold per decision tier, and a named human-in-the-loop trigger when the model surfaces ”I'm not sure”? The ”we trust the model output” posture is now a measurable risk.

Here's what works: Ask the CTO and CRO together: for our top three high-stakes AI workloads, do we have a calibrated-uncertainty cadence, a per-tier threshold, and a named escalation trigger when the model says ”I'm not sure”? ”Models can be wrong, we manage it” is no longer the answer.
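A per-tier threshold with an escalation trigger can be sketched in a few lines of Python. This is not the KAIST approach, which the source does not describe in detail; it is a plain confidence gate under stated assumptions. The tier names, the threshold values, and the `route` function are hypothetical, and the gate is only meaningful if the model's reported probabilities are reasonably well calibrated in the first place.

```python
# Hypothetical minimum confidence per decision tier; values are placeholders.
TIER_THRESHOLDS = {
    "safety_critical": 0.99,
    "financial": 0.95,
    "routine": 0.80,
}


def route(probabilities: list, tier: str) -> str:
    """Accept the model's top prediction or escalate to a human reviewer."""
    confidence = max(probabilities)
    if confidence < TIER_THRESHOLDS[tier]:
        return "escalate_to_human"
    return "accept"


# The same model output is accepted in one tier and escalated in another.
print(route([0.97, 0.02, 0.01], "financial"))
print(route([0.97, 0.02, 0.01], "safety_critical"))
```

The design choice worth debating with the CRO is the table, not the function: who signs off on each threshold, and who receives the escalation.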

7. A Live Demo Just Produced 166 Complete Machine-Learning Research Papers, And The ”AI Augments Researchers, Not Replaces Them” Frame Just Got Its First Named End-To-End Counter-Demonstration

The cleanest research-automation signal of the week is sitting on a Conversation explainer most enterprise R&D decks will read as a curiosity piece. Analemma carried out a live demonstration of its Fully Automated Research System producing 166 complete machine-learning research papers end-to-end, from problem formulation through experiment design, analysis, and writeup. The ”AI augments researchers, it does not replace them” frame that anchored most enterprise R&D operating models since 2024 just got its first named counter-demonstration at production volume.

The strategic implication: the chief research officer and head of R&D operations just gained an ”automated-research production scenario” line on the strategy scorecard. For two years, AI in research was ”we use Copilot for boilerplate, scientists do the science.” After Analemma demonstrates 166 papers in a live run, the question becomes: for our top three R&D programs, do we have a posture if a competitor produces ten times our paper output at one tenth the cost, a named quality-validation cadence for AI-generated research, and a decision-rights owner if reviewer time becomes the next R&D bottleneck?

Here's what works: Ask the chief research officer and head of R&D ops together: for our top three programs, do we have an automated-research scenario, a quality-validation cadence, and a named owner if reviewer throughput becomes the bottleneck? “Our scientists do the science” is true today; the question is what happens at the next budget cycle.

Signal vs. Noise

🟢 Signal: Data Governance ownership. Data governance is climbing in real influence on Friday morning while the generic ”AI” header keeps losing ground, a rotation that mirrors how the week landed: the EU softened its AI Act, the Pentagon named 100,000 deployed agents, and Anthropic locked $200 billion into a single cloud. The conversation has rotated from ”do we have an AI policy” into ”who is named on the agent register, the compute concentration plan, and the regulatory reset cadence.” Most coverage still keyword-screens for ”AI” and misses where buying authority actually moved.

🔴 Noise: Generic ”Generative AI” coverage. The undifferentiated ”generative AI” label still pulls volume in the trade press but lost ground this week as enterprise procurement rotated into named operating layers (workforce, compute, regulation, security). Anyone still tracking ”generative AI news” as the top-line signal is reading from a 2024 frame. The signal has moved into who is named on each named layer, not how much ”AI” is in the headline count.

From the 190K

We scanned 190,000 articles this week. Here's what no one's talking about:

The EU softened its own AI Act, the Pentagon named 100,000 AI agents already deployed, and Anthropic locked $200 billion into a single hyperscaler, all in the same seventy-two hours.

Each desk reads these as unrelated stories. The compliance press leads with the EU walk-back. The federal-tech wires write up the Pentagon agent count. The cloud trade press covers the Anthropic-Google compute commitment. Read them on the same morning and a different picture emerges. The regulatory floor that everyone was building toward just moved on the regulators' side. The workforce floor that everyone was treating as theoretical just got named at six-figure scale inside one of the largest employers on earth. And the compute floor that everyone framed as multi-vendor just got a single-vendor counter-reference at a number bigger than most national budgets. Three ”fixed assumption” floors of the AI stack all moved at once, and most enterprise scorecards still treat each floor as someone else's problem.

The strategic move on Monday is naming which of your AI workloads currently has only a 2024 answer for each floor: a single-deadline EU AI Act plan, an empty AI-agent register, and an unmapped frontier-lab-to-hyperscaler dependency. That set, whatever its size, is the next four-cycle priority.

By The Numbers

- Anthropic committed $200 billion to Google Cloud for compute and AI chips: The largest named single-vendor AI compute commitment ever disclosed by a frontier lab, locking one model maker into one hyperscaler at a scale roughly equal to the GDP of New Zealand. Multi-cloud AI procurement plans assuming frontier labs spread their risk are operating from a 2024 framework.

- The Pentagon confirmed 100,000 AI agents already deployed inside Defense operations: The first named six-figure AI-agent workforce inside a single employer, with explicit guidance that agencies must treat agents like human employees on access controls, audit trails, and governance. Enterprise HR plans still treating AI agents as “next year's pilot” are operating from a 2024 framework.

- EU lawmakers struck a provisional deal to soften the EU AI Act: The first named walk-back signal on the regulation most enterprise compliance budgets have been racing toward for two years, with named softening on high-risk classifications and transparency obligations. Single-deadline compliance roadmaps are operating from a regulatory frame that the regulator itself just amended.

- Security researchers named thousands of vibe-coded apps as a live corporate and personal data leak source: The first named AI-generation-layer audit trigger to land on a regulated industry's controls scorecard, with concrete patterns including hardcoded credentials and unauthenticated endpoints. Application security programs still running on “developers review the AI output” are operating from a 2023 framework.

- ISC2 reported that 68% of cybersecurity professionals already use or plan to use AI tools, and one in three have encountered AI-powered attacks: The cleanest single-line proof that AI is now both the defender and the attacker on the same controls scorecard. Cybersecurity workforce plans still optimizing for “hire more analysts” are operating from a pre-AI threat model.

- BASF moved AlphaEvolve into production on thousands of daily supply chain decisions: The first named industrial-scale AI optimization production reference at a Tier-1 chemical company, with explicit operating numbers a board can quote. Industrial-AI plans still framed as “pilot in one division” are operating from a 2023 maturity assumption.

- KAIST published an architectural patch that lets AI models say “I'm not sure” on their own: The first named overconfidence-calibration patch out of a major Asian research lab, targeting the most dangerous failure mode in autonomous and high-stakes AI. Risk reviews still treating model overconfidence as “an unsolved problem we manage” are operating from a 2024 frame.

See what's rising across the 190K-article corpus this week →

Deep Dive: Four Floors, Same Morning, Different Beats

Every DJ who has played a multi-floor club knows the building tells you what to mix. The mainstage, the back room, the rooftop, the basement, four floors, four crowds, four beats. Saturday morning, four floors of the AI building all moved at once. The regulatory floor moved (EU softened). The workforce floor moved (Pentagon named 100,000 agents). The compute floor moved (Anthropic locked $200 billion into Google). The security floor moved (vibe-coded apps named as a leak source). Same building, same set list. Entirely different acoustics.

The Regulatory Floor

EU lawmakers softening the AI Act is the snare hit on the compliance floor. The ”we are racing to August 2026 with a fixed deadline” assumption just got a named reset signal from Brussels itself. The chief compliance officer who walks into Monday with a regulatory rate-of-change cadence (named reset triggers, named delay-scenario plan, named decision rights if the deadline slides) absorbs the rest of Q3 cleanly. The CCO who keeps ”we are on track for August” on the line is reading from a brief the regulator itself just amended.

The Workforce Floor

The Pentagon naming 100,000 already-deployed AI agents is the kick drum on the workforce floor. The ”AI workforce strategy is a slide for next quarter” frame just got its first named federal operating stack at six-figure scale. The CHRO who walks into Monday with an AI-agent register (named access controls per agent, named audit trails, named decision-rights owner per scope violation) runs the next four cycles from architecture. The one keeping ”we use Copilot” as the AI workforce answer is treating an entire new employee class as a footnote.

The Compute Floor

Anthropic committing $200 billion to Google Cloud is the bass drop on the compute floor. The ”multi-cloud AI procurement is the default” assumption just got its first named single-vendor counter-reference at a number that resets the procurement floor for the decade. The CTO who walks into Monday with a frontier-lab-to-hyperscaler dependency map (named pairings, continuity plan per pairing, cost scenario per pairing) absorbs the next outage post-mortem as a routine evidence pull. The one running ”we are multi-cloud” without checking whose compute runs whose model will discover the concentration after the fact.

The Security Floor

Security researchers naming vibe-coded apps as a corporate data leak source is the breakdown on the security floor. The ”engineers write code, security audits code” two-decade assumption just got its first named generation-layer audit trigger. The CISO who walks into Monday with a vibe-coded inventory (named credential-scan cadence on AI output, named DLP posture on client-side endpoints, named decision-rights owner inside thirty days of a regulator inquiry) absorbs the next audit cycle as routine. The one waiting for a ”vibe-coded rule” will be drafting under deadline.

What Actually Works

- Stand up a Four-Floor Map naming the regulatory reset cadence, the AI-agent register, the compute concentration plan, and the vibe-coded audit posture on the same page. Chief compliance officer owns the regulatory floor. Chief HR officer and chief AI officer co-own the workforce floor. Chief technology officer owns the compute floor. CISO owns the security floor. One integrated dashboard. One quarterly cadence. One signature per floor.

- Refactor the AI vendor scorecard around four named-floor questions, not one general-purpose AI question. Every multi-year AI commitment now needs a regulatory reset clause, an agent-governance attachment, a compute-concentration disclosure, and a vibe-coded-audit posture. The 2024 single-question vendor scorecard broke this week.

- Build the named compute-concentration scenario before the next CFO budget cycle. The Anthropic-Google commitment priced the line. Frontier-lab-to-hyperscaler dependency maps are not optional for any enterprise running production AI on third-party model APIs.

- Build the named AI-agent register before the next regulator inquiry. The Pentagon named 100,000 agents publicly. State attorneys general and sector regulators will start asking enterprises to produce their own register inside two cycles. The CHRO and chief AI officer who walk in with the named register absorb the inquiry as a routine evidence pull.

The set list is changing because four floors of the same building moved this morning, and the dancers in the main room have not noticed yet. The DJ who keeps spinning the unified main-room set is going to play to a half-empty mainstage while the back-room crowd, the rooftop crowd, and the basement crowd are each dancing to a different beat. The DJ who hears all four floors move and mixes a different verse for each is the one whose Monday morning calendar fills up. The single-floor set is the support act now.

What's Coming

The First Tier-1 Enterprise To Publish A Frontier-Lab-To-Hyperscaler Dependency Map After The $200 Billion Commitment

Anthropic's $200 billion commitment to Google Cloud is the trigger. The next move is the first major Tier-1 enterprise to disclose, inside an analyst day or 10-Q, a named map of which hyperscaler each frontier lab they license runs on, plus the named continuity plan if a pairing locks tighter. That disclosure is probably one to two cycles out. The CTOs already drafting the map will fold the public version in cleanly.

The First State Attorney-General Or Federal Regulator To Subpoena An AI-Agent Register

The Pentagon's confirmation of 100,000 AI agents already deployed is the trigger. The next move is the first state attorney-general (most likely California, New York, or Illinois) or federal sector regulator (most likely SEC or HHS) to subpoena an enterprise's AI-agent register in a live inquiry. That subpoena is probably one quarter out. The CHROs and chief AI officers who already drafted the register absorb the request as routine evidence. The ones who waited will be drafting under the AG's deadline.

The First Major Enterprise To Disclose A Named Vibe-Coded Inventory After A Public Breach

The vibe-coded app data-leak pattern is the trigger. The next move is the first major enterprise to disclose a named vibe-coded code inventory inside a 10-K risk factor or post-breach notice, naming the AI-generated assets, the credential-scan cadence applied, and the remediation timeline. That disclosure is probably one to two quarters out, and it will arrive after the first vibe-coded breach hits the wires. The CISOs already drafting the inventory absorb the breach as a routine notification cycle. The ones who waited will be drafting the 10-K and the breach notice on the same Sunday.

For Your Team

Strategic purpose: Monday is the day this week's signals get translated into one integrated Four-Floor Map before the next architecture review. The work is one signature line per floor: the regulatory reset cadence, the AI-agent register, the compute concentration plan, and the vibe-coded inventory audit. Everything else is commentary.

Monday's meeting prompt: ”If the EU just softened its own AI Act, the Pentagon just confirmed 100,000 AI agents already at work, a single AI lab just locked $200 billion into one hyperscaler, and security researchers just named vibe-coded apps as a corporate data leak source, who in this room owns the named one-page Four-Floor Map across our top three production AI workloads, and is that owner one person, or four people who have never been in the same room?”

The Four-Floor Framework:

- One named owner AND one named anchor per floor. Chief compliance officer owns the regulatory reset cadence. Chief HR officer plus chief AI officer co-own the AI-agent register. Chief technology officer owns the compute concentration plan. CISO owns the vibe-coded inventory audit. One dashboard. One cadence. One signature per floor.

- Named regulatory reset cadence per production AI workload. Every production AI workload operating in the EU or selling into the EU gets a named EU AI Act reset trigger, a named delay-scenario plan, and a decision-rights owner if the August deadline slides by six months. The EU priced the line for you.

- Named AI-agent register per workflow. Every workflow with a deployed AI agent gets a named inventory entry, named access controls, a named audit trail, and a decision-rights owner if the agent acts outside scope. The Pentagon priced the line for you.

- Named compute concentration plan per production workload. Every production AI workload running on a third-party model gets a named frontier-lab-to-hyperscaler dependency entry, a named continuity plan, and a named cost scenario if the lab-cloud pairing locks tighter. Anthropic and Google priced the line for you.

- Named vibe-coded inventory audit per product surface. Every product surface with AI-generated code in production gets a named asset inventory, a named credential-scan cadence, a named client-side DLP posture, and a decision-rights owner inside thirty days of a regulator inquiry. The security researchers priced the line for you.

Share-worthy stat: The EU softened its own AI Act, the Pentagon confirmed 100,000 AI agents already deployed, Anthropic committed $200 billion to a single cloud, and security researchers named thousands of vibe-coded apps as a live data leak source, all inside one week. Drop all four on the next architecture review and the ”we have one compliance plan, one HR plan, one cloud plan, and one security plan, and they all assume 2024” assumption reframes itself in 30 seconds.

Go deeper: Track the AI four-floor signals in real time →

The Track of the Day

”Agencies must now start treating AI agents much like they treat human employees.”

Matthew Cornelius, on the Pentagon's 100,000 AI agents already deployed

Today's set: ”Working Class Hero” by John Lennon, mixed into ”I, Robot” by The Alan Parsons Project. Lennon named the labor question every generation has to re-answer. Parsons named the moment the question changes shape. This week, an entire new employee class was named at six-figure scale inside one of the world's largest workforces, and the HR-side governance stack most enterprises kept on a slide is now operational reality somewhere else. The DJ who keeps the workforce question on the slide is playing the support act. The DJ who pulls it onto the operating dashboard is headlining Monday morning.

Yves Mulkers, your data DJ, mixing 190,000 articles into the tracks that actually matter.

We scanned 190,000 articles this week so you don't have to. Data Pains → Business Gains.

Published: May 9, 2026 | Curated by Yves Mulkers @ Ins7ghts

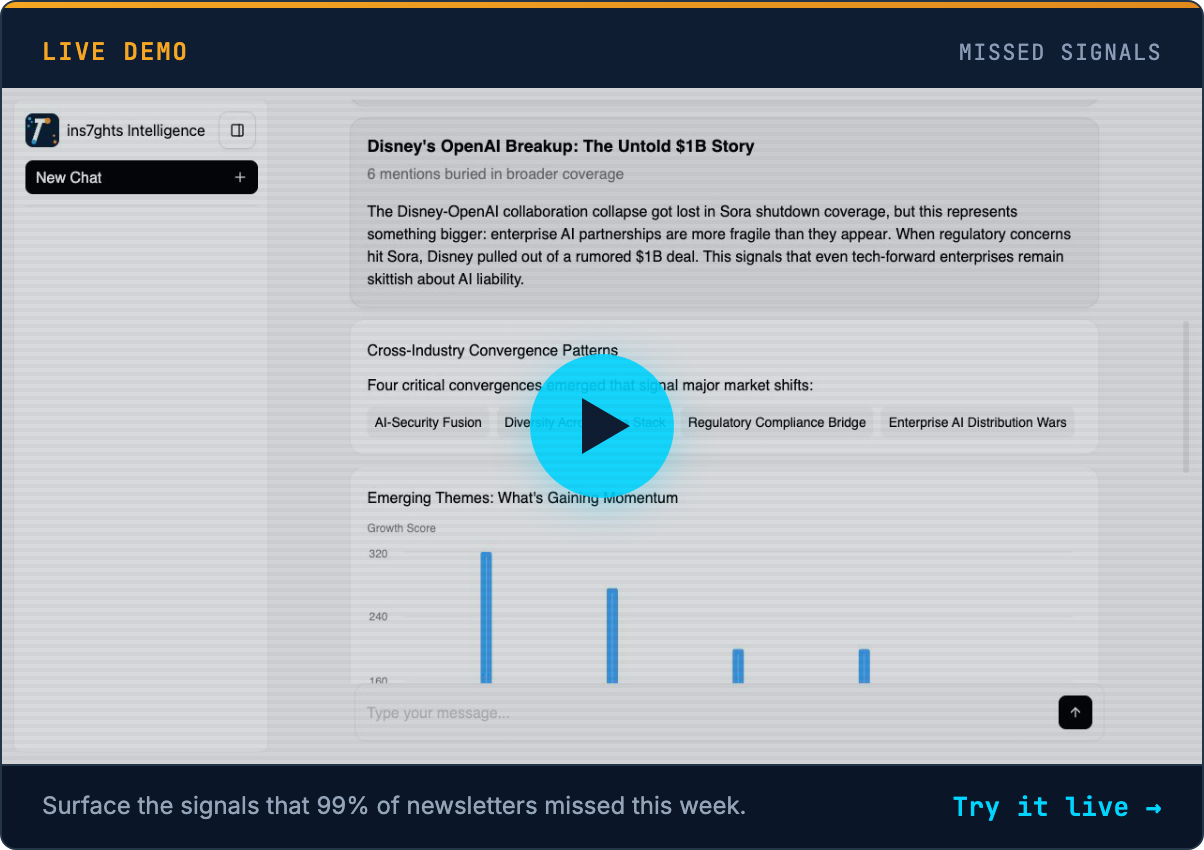

1,300+ articles scanned. 7 stories selected. Our AI distills the noise into signal—in seconds. Get early access →

Know someone who'd find this useful? Share your unique referral link →

Want Your Own AI Intelligence Briefing?

Our platform analyzes 1,000+ sources daily and delivers personalized insights in seconds.

Join the Waitlist →Founding members: Lifetime discount • Priority access • Shape the product