Your daily signal boost from 190,000+ articles, served with a DJ's ear for what actually matters.

So, What Actually Happened?

Friday morning, and the bassline is the part of the AI stack everyone treated as “settled” on Monday: who owns the distribution surface, who supplies the compute, who powers the data center, and who audits the production workload. Inside 24 hours, Apple opened its on-device AI platform to rival models across 2 billion devices, Huawei and DeepSeek locked the Ascend-plus-DeepSeek stack into China's AI self-reliance push on the back of named US export restrictions, NANO Nuclear signed a strategic MOU with Supermicro to power next-generation AI data centers with advanced nuclear energy, and Anthropic locked down all of SpaceX's Colossus 1 compute capacity in a single multi-year compute deal. We scanned 190,000 articles this week so you don't have to, and Thursday's back-wall map (supply chain, policy battlefield, security probe, prudential regulator) just got the front door, the power room, the compute floor, and the distribution layer renamed in the same morning. The walled garden has a named opening. The sovereign-AI stack has a named second sovereign. The data center power room has a named behind-the-meter reactor partner. The frontier-model compute floor has a named aerospace-backed alternative.

The Bottom Line: Thursday named the back wall of the AI building. Friday names the front door, the breaker panel, the compute mezzanine, and the audit map for shadow AI inside the regulated tenants. The CIO who walks into Monday's review with a named distribution-platform posture across iOS, a named sovereign-AI lane outside the US-anchored stack, a named data-center power-source per multi-year capacity commitment, a named non-hyperscaler frontier-compute alternative, and a named shadow-AI inventory line inside the treasury floor runs the next four cycles from architecture. Everyone else is reading the trade press while the auditor drafts the finding.

The Tracks That Matter

1. Apple Just Opened Its On-Device AI Platform To Rival Models Across 2 Billion Devices, And The ”Walled Garden Will Never Open” AI Distribution Default Just Got Its First Named Crack

The cleanest distribution-platform signal of the day is sitting on a Gurufocus wire that most consumer-tech analysts will read as ”Apple is finally catching up.” Apple opened its on-device AI platform to rival foundation models across more than 2 billion active devices, naming a developer-accessible interface that lets third-party AI providers run inside the Apple operating-system layer rather than as separate apps stacked on top of it. Read it next to the broader pattern of platform-tier AI moves over the past quarter, and a different operating thesis lands. The ”Apple will run a walled garden on AI for at least three years while it builds its own stack” assumption that has shaped every consumer-AI distribution roadmap since the start of 2026 just got its first named operating crack, with 2 billion device-surfaces behind the crack. Every CMO, head of product, and head of mobile whose 2026 distribution plan still treats Apple as a closed AI surface just had the operating geometry redrawn for them inside one Friday morning.

The strategic implication is that the head of product and the head of mobile distribution just gained a named ”iOS-AI distribution lane” line on the go-to-market scorecard, one that did not exist on Thursday. For two years, consumer AI distribution on iOS was a single-vendor exercise with a named set of Apple-controlled features and a named ”wait for Apple Intelligence” footnote. After this Apple Intelligence platform opening, the question becomes ”for our top three AI-powered consumer experiences, do we have a named iOS-platform integration plan, a named alternative-model selector for the user, a named privacy-and-data-residency posture for on-device inference, and a named contingency if Apple narrows the platform terms in a future release?” The CMO whose 2026 plan still names a single AI-on-iOS path is reading from a 2024 distribution map. The CMO who builds a named two-lane iOS-AI distribution model, with a named alternative-model integration and a named lifecycle, will absorb the next platform-policy change as a routine release variance.

The deeper signal is that the platform-tier of the consumer-AI stack just rotated from ”single hyperscaler will own each device family” to ”device-owner controls the surface, model providers compete inside it.” Read Apple's move next to the broader pattern of enterprise AI shifting decisively into deployment phase with compute architectures pivoting toward inference, and the picture sharpens. Inference is moving to the edge, the surface owner controls the runtime, and the model providers are about to compete on quality-per-watt-per-second on a device they do not own. The CMO who builds a named iOS-platform AI integration matrix this quarter walks into Q3 ahead of every team still planning around a single AI-on-iOS vendor.

“Enterprise AI enters deployment phase, shifting compute architectures toward inference.”

— DIGITIMES Report

Here's what works: Before the next consumer-AI roadmap review, ask the head of product and the head of mobile together: ”for our top three AI-powered consumer experiences, do we have a named iOS-platform integration, a named alternative-model selector, a named privacy posture for on-device inference, and a named contingency if platform terms shift?” If the answer is ”we are aligned with Apple Intelligence,” that is the project. The Apple platform opening is the trigger; the named two-lane iOS-AI distribution model is the deliverable.

2. Huawei And DeepSeek Just Locked Down China's AI Self-Reliance Stack As Ascend 950PR Demand Surges On US Export Restrictions, And The ”There Is Only One Inference Supply Chain” Procurement Default Just Got Its Second Named Sovereign Lane

The cleanest sovereign-procurement signal of the day is sitting on a Chosun English wire that most US enterprise procurement teams will skim past as “China tech news, irrelevant to our 2026 plan.” Huawei launched its latest AI processor, the Ascend 950PR, in March and is seeing increased demand directly tied to US export restrictions on NVIDIA, with DeepSeek-built models running natively on the Ascend stack and the combined offering being positioned as the operating reference for China's AI self-reliance push. Read it next to the broader pattern (Saudi HUMAIN One last week, South Korea's 560-billion-won Upstage commitment last week, South Korea's National Growth Fund now naming Upstage and Rebellions as sovereign-AI bets on Friday), and a clear operating shape lands. The “there is one global inference supply chain anchored on US silicon, and we will procure from it forever” assumption that has shaped every multinational AI procurement plan since 2023 just got its second named sovereign alternative with a named silicon-and-model pair behind it. The first sovereign lane was the Saudi-AWS HUMAIN One stack. The second is the Huawei-Ascend-DeepSeek stack. The third (Upstage-Rebellions-Korea) is being capitalized in real time.

The strategic implication is that the chief procurement officer and the head of geopolitical risk just gained a named ”sovereign-AI procurement matrix” line on the multi-year capacity scorecard, one with at least three named lanes (US-anchored, China-anchored, named-third-country anchored). For three years, multinational AI procurement was a single-supply-chain exercise. After Friday's Huawei-DeepSeek confirmation plus the Korean fund move, the question is ”for our top three multi-year AI capacity commitments inside regulated jurisdictions, do we have a named sovereign-AI procurement posture per jurisdiction, a named compliance-and-data-residency map per sovereign lane, a named cost-per-inference comparison across the named lanes, and a named contingency if a single sovereign lane gets blocked or sanctioned inside one quarter?” The CPO whose 2026 plan still names a single global supply chain is reading from a 2023 capacity model. The CPO who builds a named three-lane procurement matrix, with a named per-jurisdiction posture, will absorb the next export-control or tariff shock as a routine variance review.

The deeper signal is that the sovereign-AI lane is consolidating fast around three named regional anchors (Middle East, China, East Asia) inside the same window the US-anchored stack is consolidating around three or four named hyperscalers. Three named sovereign anchors in three named regions inside two months. Expect the European sovereign-AI lane to land its first named silicon-plus-model pair inside one quarter. Expect the first major multinational to publicly disclose a sovereign-procurement variance in an SEC filing inside two quarters. The CPOs who already drafted the multi-lane procurement matrix will absorb the cascade as routine. The ones who treated each sovereign announcement as a regional curiosity will be drafting the matrix under their own audit committee's deadline.

Here's what works: Before the next multi-year capacity review, ask the chief procurement officer and the head of geopolitical risk together: ”for our top three multi-year AI capacity commitments, do we have a named sovereign-AI lane per jurisdiction, a named compliance-and-data-residency map, a named cost-per-inference comparison, and a named contingency if one lane gets blocked?” If the answer is ”we are standardized on the US-anchored stack,” that is the project. The Huawei-DeepSeek consolidation plus the Korean fund move are the trigger; the named three-lane procurement matrix is the deliverable.

3. NANO Nuclear And Supermicro Just Signed A Strategic MOU To Power Next-Generation AI Data Centers With Advanced Nuclear Energy, And The ”Grid Will Catch Up Eventually” Power Default Just Got Its First Named Behind-The-Meter Reactor Anchor

The cleanest data-center-power signal of the day is sitting on a NANO Nuclear press wire that most CFOs will read as “speculative nuclear partnership news.” NANO Nuclear Energy signed a strategic memorandum of understanding with Supermicro to power the next generation of AI data centers with advanced nuclear energy, naming an operating model that pairs Supermicro's high-density compute with behind-the-meter advanced-reactor capacity to deliver dedicated zero-carbon baseload to AI workloads. Read it next to Tuesday's named “Data Center Power Capacity” surge across the corpus, and a different operating thesis lands. The “the grid will catch up to AI demand eventually, and we will buy power on the spot market until it does” assumption that has shaped every hyperscaler-and-colocation power plan since 2022 just got its first named behind-the-meter advanced-reactor partnership pairing a named compute vendor with a named reactor builder. The era of “AI is a power question we will solve next year” closed inside one Friday morning. The era of “AI is a power-architecture question we name next quarter” just opened.

The strategic implication is that the head of infrastructure and the head of energy procurement just gained a named ”behind-the-meter zero-carbon baseload” line on the data-center scorecard, with named compute-and-reactor pairing options behind it. For four years, AI data-center power was a grid-spot-market-and-renewable-PPA conversation with a named ”wait for the grid to catch up” footnote. After this NANO-Supermicro MOU, the question is ”for our top three AI compute commitments through 2030, do we have a named behind-the-meter power architecture per multi-year capacity tier, a named zero-carbon baseload partner, a named permitting-and-regulatory posture per jurisdiction, and a named contingency if grid interconnect timelines slip another two years?” The head of infrastructure whose 2026 plan still treats power as ”buy what's available on the spot market” is reading from a 2022 capacity map. The one who builds a named behind-the-meter advanced-reactor line will absorb the next grid-interconnect delay or PPA price spike as a routine power-architecture variance.

The deeper signal is that AI's power-question just rotated from ”the grid is the variable” to ”the reactor is the architecture.” Three converging signatures land in the same window: NANO-Supermicro on Friday, the named ”Data Center Power Capacity” entity surging in the corpus, and the broader story of AI hyperscalers signing nuclear deals with established utilities over the past six months. The CFO who consolidates these three signals into one named ”AI-power-architecture matrix” with a named partner per capacity tier (utility-PPA, behind-the-meter SMR, on-site advanced-reactor MOU) will negotiate Q3's multi-year contracts from a named position. The CFO who reads each as separate vendor news will discover the gap when the next interconnect delay lands during the budget cycle.

Here's what works: Before the next multi-year infrastructure review, ask the head of infrastructure and the head of energy procurement together: ”for our top three AI compute commitments through 2030, do we have a named behind-the-meter power architecture per capacity tier, a named zero-carbon baseload partner, a named permitting posture per jurisdiction, and a named contingency if grid interconnect slips two more years?” If the answer is ”we will buy what's available on the spot market,” that is the project. The NANO-Supermicro MOU is the trigger; the named three-tier AI-power-architecture is the deliverable.

4. Anthropic Just Locked Down All Of SpaceX's Colossus 1 Compute Capacity In One Multi-Year Deal, And The ”Hyperscaler Cloud Is The Only Frontier-Compute Lane” Procurement Default Just Got Its First Named Aerospace-Backed Alternative

The cleanest frontier-compute procurement signal of the day is sitting on a Wall Street Journal wire that most enterprise CIOs will read as ”another Anthropic compute deal.” Anthropic inked a multi-year deal to use all of SpaceX's Colossus 1 AI supercomputer compute capacity, with Anthropic's same-day announcement also naming higher usage limits for Claude inside the deal envelope. Read Anthropic's own framing of the deal as a compute-and-usage-limits expansion, and the operating shape sharpens. The ”frontier-model compute will only ever sit inside the three-or-four named hyperscaler clouds, and our procurement plan can be built around them” assumption that has anchored every Tier-1 frontier-AI capacity plan since 2023 just got its first named aerospace-backed alternative with named multi-year capacity commitment behind it. SpaceX is now a named frontier-compute supplier. The compute floor of the AI stack just gained a named non-hyperscaler entrant inside one Friday morning.

The strategic implication is that the chief AI officer and the head of cloud procurement just gained a named ”non-hyperscaler frontier-compute lane” line on the AI architecture scorecard, with a named multi-year reference deal behind it. For three years, frontier-compute procurement was a three-named-hyperscaler exercise with a named ”wait for the next-generation chip release” footnote. After this Anthropic-SpaceX deal, the question is ”for our top three frontier-model workloads, do we have a named non-hyperscaler compute alternative inside the procurement matrix, a named latency-and-locality posture per alternative, a named contracting-and-exit-clause posture if the aerospace-backed lane scales faster than expected, and a named usage-limits-and-rate-card comparison across all named lanes?” The CAIO whose 2026 plan still names three hyperscaler compute lanes is reading from a 2023 architecture map. The CAIO who builds a named four-lane compute procurement matrix, with a named non-hyperscaler partner and a named usage-limits posture, will absorb the next compute-shortage cycle as routine roadmap variance.

The deeper signal is that the frontier-compute supply tier is fragmenting along non-traditional lines (aerospace-backed compute, sovereign-anchored compute, behind-the-meter reactor-powered compute) at the same speed the foundational-model tier is consolidating around three-or-four named providers. Read the Anthropic-SpaceX deal next to the NANO-Supermicro MOU and the Huawei-DeepSeek consolidation, and the operating signature lands. The compute floor is rewriting itself at three different layers simultaneously (where it sits, who supplies it, and what powers it). The CAIO who consolidates these three signals into one named ”compute-and-power architecture matrix” walks into Q3 with a single signature per layer. The one who reads each as separate vendor news will rebuild the matrix in Q4 with the variance already on the audit committee agenda.

Here's what works: Before the next compute-architecture review, ask the chief AI officer and the head of cloud procurement together: ”for our top three frontier-model workloads, do we have a named non-hyperscaler compute alternative, a named latency-and-locality posture, a named exit clause if the alternative lane scales fast, and a named usage-limits comparison across lanes?” If the answer is ”we are standardized on the three named hyperscalers,” that is the project. The Anthropic-SpaceX Colossus 1 deal is the trigger; the named four-lane compute procurement matrix is the deliverable.

5. Forbes Just Named Shadow AI Inside Financial Infrastructure While Compliance Hasn't Caught Up, And The ”We Have An AI Policy” CISO Posture Just Got Its First Named Treasury-Floor Audit Map

The cleanest financial-controls signal of the day is sitting on a Forbes column that most CFOs will treat as ”compliance commentary, not actionable.” Daraabasiita argued in Forbes that shadow AI has reached financial infrastructure (treasury, payments, settlement, regulatory reporting) while named compliance frameworks have not caught up, naming specific gaps in third-party AI tool tracking, model-risk attestation for finance-team-built agents, and audit trails on AI-touched journal entries. Read it next to Thursday's APRA prudential warning, the OECD publishing due diligence guidance for responsible AI, and the EU AI Act enforcement window for high-risk systems opening in August 2026, and the broader audit signature sharpens. The ”we filed the AI policy and the model-inventory, the finance team is covered” CFO posture that most large enterprises adopted in 2024-2025 just got its first named treasury-floor audit map, with named control-gaps behind it. The CFO whose 2026 plan still treats shadow AI as ”an IT-side risk we delegated to the CISO” is reading from a 2024 control taxonomy that just got the first comprehensive 2026 finance-floor audit map drawn on top of it.

The strategic implication is that the chief financial officer and the chief audit executive just gained a named ”shadow-AI inside financial infrastructure” line on the control scorecard, with named gaps inside named workflows (treasury cash forecasting, payments, fraud screening, financial close, regulatory-report drafting). For two years, shadow-AI was an IT discovery exercise with a CISO inventory and a ”we will catch the rest at year-end” footnote. After Forbes plus OECD plus the broader pattern, the question is ”for our top three production AI uses inside the finance function, do we have a named tool-and-agent inventory at the workflow level (not the application level), a named model-risk attestation per finance-team-built agent, a named audit trail per AI-touched journal entry or wire instruction, and a named decision-rights owner inside thirty days of an external auditor inquiry?” The CFO whose 2026 plan still treats this as a CISO question is reading from a 2024 governance map. The CFO who builds a named treasury-floor shadow-AI audit posture will absorb the next external-auditor walk-through as a routine evidence pull.

The deeper signal is that the control conversation around AI just rotated from ”named-application inventory” to ”named-workflow inventory” inside the regulated functions. Expect the first Big Four auditor to publish a named shadow-AI-in-finance audit framework inside one quarter. Expect the first major Tier-1 issuer to disclose a finance-floor AI-controls weakness in a 10-K filing inside two quarters. The CFOs and CAEs who already drafted the named workflow-level posture absorb the cascade as routine evidence pulls. The ones who treat Forbes as ”commentary” will be drafting the posture under the auditor's deadline.

Here's what works: Before the next audit committee meeting, ask the chief financial officer and the chief audit executive together: ”for our top three production AI uses inside the finance function, do we have a named workflow-level tool-and-agent inventory, a named model-risk attestation per finance-team-built agent, a named audit trail per AI-touched journal entry, and a named decision-rights owner inside thirty days of an external-auditor inquiry?” If the answer is ”the CISO has the AI inventory,” that is the project. The Forbes shadow-AI map plus the OECD guidance are the trigger; the named treasury-floor shadow-AI audit posture is the deliverable.

6. OpenBind Just Released The First Public Dataset And Predictive Model For AI-Enabled Drug Discovery Binding Measurements, And The ”Pharma AI Is Locked Behind Pre-Competitive Walls” Frame Just Got Its First Named Open Foundation Layer

The cleanest life-sciences AI signal of the day is sitting on a Eurasia Review wire that most pharma BD teams will skim past as ”academic data release.” OpenBind, a UK-led consortium operationalized through Diamond Light Source and supported by the Department for Science, Innovation and Technology, released its first publicly available dataset of 800 high-quality protein-drug binding measurements alongside an open predictive AI model, with the consortium framing the release as the AlphaFold-equivalent foundation for protein-drug interaction modeling that has not existed until now. The ”pharma AI is locked behind pre-competitive walls and we cannot use anyone else's binding data because everyone hoards it” assumption that has shaped every drug-discovery-AI roadmap since 2021 just got its first named open foundation layer with 800 measurements and a public predictive model behind it. Future tranches are pre-named for COVID-19, malaria, dengue, Zika, and cancer.

The strategic implication is that the chief scientific officer and the head of computational chemistry just gained a named ”open binding-data foundation” line on the discovery scorecard, with a named expansion roadmap and a named consortium operating model behind it. For five years, drug-discovery-AI was an internal-data-and-internal-model conversation with a named ”we cannot use OpenBind-style assets because they don't exist yet” footnote. After Friday's release, the question is ”for our top three discovery programs, do we have a named integration plan with the OpenBind dataset and model, a named overlap analysis between our internal binding library and the OpenBind release, a named contribution-and-IP posture if our team submits future measurements into the open consortium, and a named contingency if a competitor moves first on the open foundation?” The CSO whose 2026 plan still treats binding-data as fully internal is reading from a 2021 data-architecture map. The CSO who builds a named hybrid open-and-internal binding-data architecture will absorb the next discovery-cycle as a real velocity advantage.

The deeper signal is that the open-foundation tier of vertical AI is starting to land in regulated, IP-sensitive industries that everyone assumed would be the last to open. Pharma-binding-data on Friday. Expect the first open-foundation release for clinical-trial endpoint data inside two quarters. Expect the first regulator-backed open-data consortium for diagnostic-imaging benchmarks inside one year. The CSOs who already drafted a named open-foundation integration posture will be operating from a velocity advantage when the next open release lands.

“AlphaFold2 revolutionised protein structure prediction by leveraging decades of experimental data on protein structures in the PDB. The equivalent of such a dataset for protein-drug complexes does not yet exist, but OpenBind aims to create it.”

— OpenBind consortium

Here's what works: Before the next discovery-strategy review, ask the chief scientific officer and the head of computational chemistry together: ”for our top three discovery programs, do we have a named integration plan with the OpenBind dataset and model, a named overlap analysis with our internal library, a named contribution-and-IP posture, and a named contingency if a competitor moves first on the open foundation?” If the answer is ”we use only internal binding data,” that is the project. The OpenBind release is the trigger; the named hybrid open-and-internal binding-data architecture is the deliverable.

7. Tableau Just Went Public About Its Transition As AI Forces BI Vendors To Evolve, And The ”Data Visualization Is A Mature Category” Procurement Default Just Got Its First Named Restructuring Signal

The cleanest BI-vendor signal of the day is sitting on a TechTarget feature that most CDOs will read as ”another vendor analyst piece.” TechTarget reported that Tableau is in active transition as AI forces BI vendors to evolve, with the named transition covering query interfaces, semantic layer ownership, and the relationship between dashboards and conversational AI. Read it next to the broader pattern (Flotek's Q1 showing Data Analytics segment reaching profit parity with the legacy Chemistry Technologies business after 295% year-over-year growth, ServiceNow and Accenture launching a forward-deployed engineering program to scale agentic AI across the enterprise), and the operating shape sharpens. The ”BI is a mature procurement category and our 2026 plan can stay anchored on the same Tableau-or-Power-BI default” assumption that has anchored every CDO scorecard since 2018 just got its first named major-vendor restructuring signal in five years. The semantic layer is rotating ownership. The dashboard is rotating relevance against conversational AI surfaces. The procurement default is rotating with both.

The strategic implication is that the chief data officer and the head of analytics just gained a named ”BI-vendor-transition risk” line on the procurement scorecard, one that did not exist on Thursday morning. For five years, BI procurement was a same-vendor renewal exercise. After Tableau's named transition plus the broader category shift, the question is ”for our top three analytics surfaces, do we have a named conversational-AI integration posture, a named semantic-layer ownership question (in the BI tool, in the warehouse, or in a separate semantic platform), a named contingency if our incumbent vendor is acquired or repositioned inside one quarter, and a named cost-and-license-economics review window if AI-native BI alternatives drop renewal costs by 30 percent or more?” The CDO whose 2026 plan still treats BI as a same-vendor renewal is reading from a 2020 procurement model. The CDO who builds a named transition-aware BI architecture, with a named semantic-layer owner and a named conversational-AI posture, will absorb the next vendor restructuring as a routine renewal review.

The deeper signal is that the data-and-analytics tier of the operating stack is rotating from “named tool per use case” to “named semantic discipline plus named conversational surface” inside the same window the foundational-model tier is consolidating. The CDO who reads BI restructuring as “vendor noise” will spend Q4 explaining a missed renewal-window-savings opportunity to a CFO who has already seen the Flotek-style economics. The CDO who reads it as “category architecture is rewriting itself” will write the next four quarters' procurement playbook from a named position.

Here's what works: Before the next analytics-procurement review, ask the chief data officer and the head of analytics together: ”for our top three analytics surfaces, do we have a named conversational-AI integration posture, a named semantic-layer ownership question, a named contingency if the incumbent vendor restructures, and a named cost-review window if AI-native BI drops renewal costs 30 percent?” If the answer is ”we are renewing Tableau,” that is the project. The Tableau transition is the trigger; the named semantic-plus-conversational BI architecture is the deliverable.

Signal vs. Noise

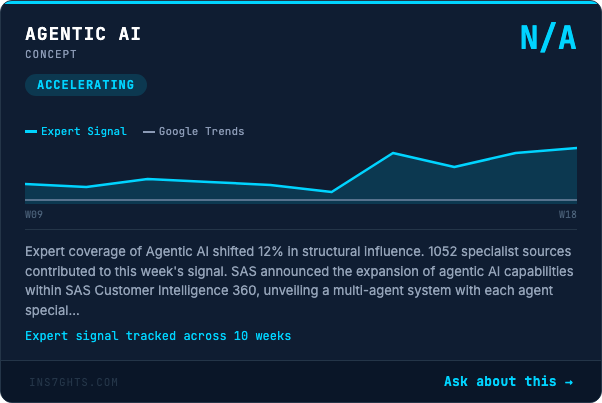

🟢 Signal: Microsoft is the only top-tier organization this morning gaining real-world influence at +109 percent on a 43-article base while mention volume softens around it, paired with Machine Learning the entity gaining +92 percent influence on 59 articles and Data Governance climbing +132 percent in foundational connectivity on 50 articles. Read those three signatures together and the operating frame sharpens. The conversation has rotated from ”which vendor is loudest” into ”which named operating discipline (data governance, machine-learning rigor, named platform integration) is the load-bearing layer.” Real-world influence climbing on Data Governance and Machine Learning the discipline while the loud generic AI labels lose ground means the operating center of gravity has shifted from ”AI tooling chatter” to ”named operating-discipline rigor under regulator and auditor pressure.” The CIO who walks into Monday's review with a named data-governance owner, a named machine-learning model-risk discipline, and a named platform-integration posture across the named operating surfaces moves two cycles cleaner than the CIO still framing AI strategy as a tool-shopping exercise.

🔴 Noise: AWS still pulled 19 mentions today but lost 5 percent of structural influence, AI Governance pulled 15 mentions while shedding 20 percent, and the entire generic ”AI” label has fragmented across 62 mentions while the named operating layers (compute, distribution, power, audit, sovereign) absorbed the actual influence growth. AWS sliding back to noise on a Friday morning is unusual; it tells you the conversation is rotating away from ”which hyperscaler” into ”which compute-and-power architecture per workload.” AI Governance shedding 20 percent of influence on the same morning Forbes named shadow-AI inside financial infrastructure tells you that the operating function has stopped buying the abstract AI-governance pitch and started naming workflow-level controls instead. Procurement filters still keyword-screening on ”AWS,” ”AI Governance,” and the generic ”AI” header are filtering for vendor marketing, not buyer signal. Rebuild the filter around the named operating layers (compute architecture, distribution surface, power source, finance-floor controls) and inbound vendor relevance roughly doubles inside two months.

From the 190K

We scanned 190,000 articles this week. Here's what no one is talking about:

The pattern of the day is that the 24-hour window from Thursday's named back-wall stack into Friday morning has named the front door of the same building: the distribution surface (Apple Intelligence opens), the sovereign procurement lane (Huawei-DeepSeek), the power room (NANO-Supermicro reactor), the compute mezzanine (Anthropic-SpaceX Colossus 1), and the audit map of the regulated tenants (shadow AI inside financial infrastructure). All of it inside the same morning.

Watch the desks separately and you would call this five unrelated stories. A consumer-platform policy update. A China sovereign-tech wire. A small-modular-reactor MOU. A startup-and-aerospace compute deal. A Forbes opinion column. Read them as one substrate and the picture sharpens fast. Tuesday named the seven floors of the AI building and the inspectors of each floor. Thursday named the back wall (supply chain, policy battlefield, security probe, prudential regulator, consumer-protection audit map). Friday names the front door (Apple opens its surface to rivals), the breaker panel (NANO-Supermicro behind-the-meter reactor), the alternative compute mezzanine (Anthropic-SpaceX), the second sovereign procurement lane (Huawei-DeepSeek), and the audit map of the finance-floor tenant (Forbes shadow-AI). The strategic conversation in Tier-1 boardrooms is still framed as ”buy AI capabilities versus build them.” The actual operating frontier is ”name the building, name the inspectors per floor, name the back wall per system, name the front door per surface, name the power source per capacity tier, name the alternative compute lane per workload, name the second-sovereign procurement posture per jurisdiction, and name the workflow-level audit map per regulated tenant before the next inspection redraws the floor plan again.”

The operational implication is that the 2026 multinational AI architecture cycle will be won by the firm that consolidates Tuesday's Stack-Inspector Map AND Thursday's back-wall additions AND Friday's front-door, breaker-panel, compute-mezzanine, second-sovereign, and finance-floor-audit additions into one named ”Stack-Building Map,” with one integrated owner per layer, one named distribution-platform posture per consumer surface, one named power-source per capacity tier, one named non-hyperscaler compute alternative per workload tier, one named sovereign-procurement lane per jurisdiction, and one named workflow-level audit posture per regulated tenant. The firms that let these conversations run in parallel will discover the duplication in the Q4 audit, when the cost of consolidating after the fact is two-to-three times the cost of consolidating before. The firms that consolidate now will run multinational AI architectures with a single named owner per layer, fewer surprise variances, and a real signature on every consequential procurement, distribution, power, compute, sovereign, and audit-facing decision.

🔍 Below the surface: Here's how you spot real infrastructure. Machine Learning the entity sits on a 500-plus connectivity score across 104 articles as the load-bearing anchor of the corpus this morning, Data Analytics sits on 492 across 89 articles right behind it, and Regulatory Compliance sits on 493 across 71 articles in the same load-bearing tier. When the operating frame quietly shifting all three is the move from “named tool” to “named operating discipline under named regulators inside named jurisdictions,” that is not a vendor cycle. That is an architectural rewrite under regulatory, procurement, and supply-chain pressure. The shift does not show up in any vendor leaderboard. It shows up in the platform openings, the sovereign procurements, the power MOUs, the non-hyperscaler compute deals, and the finance-floor audit maps. The trade publications pulling these threads together (the consumer-platform analyst wires, the China-tech regional press, the small-modular-reactor industry releases, the WSJ frontier-compute desk, and the regulator-watcher columns) are running a quarter ahead of the Tier-1 analyst houses, which are running two quarters ahead of operating-committee dashboards. The firms reading the trade press of the operating function adjacent to their own are reading next quarter's variance commentary before it is written.

By The Numbers

- Apple opened its AI platform to rivals across more than 2 billion active devices — The first named operating crack in the Apple AI walled garden, with the largest single-vendor consumer surface in the world behind the crack. Distribution scorecards still treating iOS as a closed AI surface are operating from a 2024 map.

- Anthropic locked all of SpaceX's Colossus 1 compute capacity in one multi-year deal, with same-day higher usage limits for Claude — The cleanest single-line reframe of frontier-compute procurement in a year. The ”three-named-hyperscaler” assumption just got its first named non-hyperscaler alternative with a multi-year reference deal behind it.

- NANO Nuclear and Supermicro signed a strategic MOU to power AI data centers with advanced nuclear energy — The first named compute-vendor-and-reactor-builder pairing for behind-the-meter zero-carbon AI baseload. Power scorecards still treating the grid as the sole AI energy supply are reading from a 2022 capacity model.

- Huawei's Ascend 950PR is seeing demand surges directly tied to US export restrictions on NVIDIA, with DeepSeek models running natively on the stack — The second named sovereign-AI lane in two months, after the Saudi HUMAIN One stack. Procurement matrices still naming a single global supply chain are reading from a 2023 capacity plan.

- Flotek's Q1 2026 results showed the Data Analytics segment reaching profit parity with the legacy Chemistry Technologies business, with 295% year-over-year revenue growth and Data-Analytics-as-a-Service revenue up 785% since Q1 2025 — The cleanest single-line reframe of analytics-as-a-product economics in a year. Tabletop “BI is a feature, not a P&L” assumptions are operating from a 2020 model.

- Microsoft climbed +109 percent in real structural influence on a 43-article base while AWS shed 5 percent on 19 articles, and Data Governance climbed +132 percent in foundational connectivity on 50 articles — The signature of a category that has rotated from ”which hyperscaler is loudest” into ”which named operating discipline is the load-bearing layer.” Procurement filters keyword-screening on ”AWS” and the generic ”AI” header are filtering for vendor marketing, not buyer signal.

- Machine Learning sits on a 500-plus connectivity score across 104 articles as the highest foundational-influence anchor in the corpus, with Data Analytics at 492 across 89 articles and Regulatory Compliance at 493 across 71 articles right behind it — The cleanest leading indicator that the load-bearing tier of the operator stack is the named operating discipline, not the named tool. The CTO whose 2026 dashboard still leads with a single AI bucket is two cycles behind operator-grade peers.

- GDPR pulled 61 article mentions, CCPA pulled 38, HIPAA pulled 37, and the EU AI Act enforcement window for high-risk systems opens in August 2026 — The cleanest leading indicator that the regulatory frame inside enterprise AI has rotated from ”single global compliance posture” into ”named-regulation-by-named-regulation matrix per jurisdiction with a named August-2026 enforcement clock on the EU AI Act.” The CCO whose 2026 plan still names a single compliance posture is two cycles behind regulated-jurisdiction peers.

Deep Dive: The AI Building Just Got Its Front Door, Its Breaker Panel, And Its Compute Mezzanine Named

Every DJ who has played a venue with a security desk on the way in and a regulator who shows up at midnight knows that Thursday's named-back-wall map was only the inspector's view. The full venue runs on the front door (where the audience walks in), the breaker panel (where the lights and the sound stay on), the compute mezzanine (where the show actually gets mixed), and the audit map of the bar (where the licensee posts the regulator's notice). Tuesday named the floors. Thursday named the back wall. Friday names the front door (Apple Intelligence opens), the breaker panel (NANO-Supermicro behind-the-meter reactor), the compute mezzanine (Anthropic-SpaceX Colossus 1), the second-sovereign side entrance (Huawei-DeepSeek-and-Korean-fund stack), and the named audit map of the finance-floor tenant (Forbes shadow-AI). Same set list. Same named floors. Entirely different building.

The Front Door

The Apple-Intelligence-opens-to-rivals announcement is the bass drop on the distribution-surface floor. The era of ”Apple will run a closed AI surface for at least three more years” closed inside one Friday morning. There is now a named developer interface for third-party model providers across more than 2 billion devices. The CMO who walks into Q3 with a named iOS-AI distribution lane, a named alternative-model selector, and a named privacy-and-data-residency posture absorbs the next platform-policy variance as routine release work. The CMO who treats this as ”Apple is finally catching up” will write the catch-up plan as an earnings-call footnote with the variance already on the page.

The Breaker Panel

The NANO-Supermicro MOU is the snare on the power-source floor. The era of ”the grid is the variable” closed not because the grid changed, but because the architecture changed. There is now a named behind-the-meter advanced-reactor pairing with a named compute vendor at the other end. The head of infrastructure who walks into Q3 with a named three-tier power architecture (utility PPA, behind-the-meter SMR, on-site advanced reactor) absorbs the next interconnect delay as routine power-architecture variance. The one who keeps ”spot market” on the line will discover the gap when the next interconnect timeline slips through the budget cycle.

The Compute Mezzanine And The Second-Sovereign Entrance

The Anthropic-SpaceX deal is the kick drum on the alternative-compute floor. The Huawei-DeepSeek consolidation plus the Korean-fund Upstage-and-Rebellion bet is the supporting drum on the second-sovereign floor. The era of “frontier compute lives only inside three named hyperscalers, and our procurement plan can be built around them” closed inside one Friday morning. There is now a named aerospace-backed compute alternative and a named non-US-anchored sovereign-AI procurement lane, with named silicon-and-model pairs behind both. The CAIO and the CPO who walk into Q3 with a named four-lane compute matrix and a named three-lane sovereign matrix absorb the next compute-shortage cycle and the next export-control shock as routine variance reviews. The ones who keep “three hyperscalers, one supply chain” on the line will rebuild both matrices in Q4 with the variance already on the audit committee agenda.

What Actually Works

- Run a 90-minute ”Stack-Building Map” exec session — Map every floor of the AI building (distribution surface, sovereign procurement, power source, compute mezzanine, regulator audit map, finance-floor controls). One named owner per floor, one named partner per layer, one named scenario per axis of geopolitical or regulatory risk. The session is the deliverable.

- Name a non-hyperscaler compute alternative inside the procurement matrix — Anthropic-SpaceX is the public reference. Even if you do not buy from a non-hyperscaler today, the matrix needs a named lane so the budget cycle does not catch you flat.

- Build a workflow-level shadow-AI inventory inside the finance function — Tool inventories at the application level miss the spreadsheet macros, the Copilot prompts inside Excel, and the finance-team-built agents on the treasury floor. Workflow-level inventory finds them.

- Name a behind-the-meter power architecture for every multi-year capacity tier through 2030 — NANO-Supermicro is the trigger. The deliverable is one named partner per tier, one named regulatory posture per jurisdiction, and one named contingency if grid interconnect slips two more years.
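For the shadow-AI inventory item in the list above, the difference between an application-level and a workflow-level inventory can be shown with a small sketch. Everything here is assumed for illustration: the `Workflow` record, the `shadow_touchpoints` helper, and the sample treasury entries are hypothetical, not a reference to any real audit framework.

```python
from dataclasses import dataclass, field


@dataclass
class Workflow:
    """One finance workflow and the AI touchpoints observed inside it."""
    function: str  # owning function, e.g. "treasury"
    name: str      # workflow name, e.g. "daily cash forecast"
    ai_touchpoints: list[str] = field(default_factory=list)


def shadow_touchpoints(workflows, app_inventory):
    """List touchpoints seen in workflows but missing from the app-level inventory."""
    known = {tool.strip().lower() for tool in app_inventory}
    return [
        (w.function, w.name, touchpoint)
        for w in workflows
        for touchpoint in w.ai_touchpoints
        if touchpoint.strip().lower() not in known
    ]


# Illustrative data only: the application-level inventory knows about Excel,
# but not about what runs inside it.
workflows = [
    Workflow("treasury", "daily cash forecast",
             ["Excel", "Copilot prompt in Excel", "team-built forecasting agent"]),
    Workflow("treasury", "FX exposure report", ["spreadsheet macro"]),
]
app_inventory = ["Excel"]
gaps = shadow_touchpoints(workflows, app_inventory)
```

In this toy data the application-level list covers one tool, while the workflow-level pass surfaces three touchpoints it never recorded.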

The festival is happening whether you bought a ticket or not. The only question is whether you walked in through the front door you named on Friday morning, or whether you are still standing in the parking lot reading the marquee.

What's Coming

The First Major Tier-1 Bank Discloses A Finance-Floor Shadow-AI Controls Weakness In A 10-K Filing Inside Two Quarters

The Forbes shadow-AI map plus the OECD due-diligence guidance plus Thursday's APRA prudential warning is the three-signature set that triggers the first finance-floor disclosure. Watch the Big Four for the named audit framework. Watch the SEC comment letters for the named language.

The First European Sovereign-AI Lane Names Its Silicon-Plus-Model Pair Inside One Quarter

The Huawei-DeepSeek confirmation plus the Korean-fund Upstage-and-Rebellion bet plus the broader Saudi HUMAIN One stack means the European sovereign-AI lane is the conspicuously unnamed entrant. Expect a named European silicon partner and a named European model provider to be paired and capitalized, likely with named state-fund money behind it, before Q3 closes.

The First C-Suite ”Chief AI Innovation Officer” Hire Lands At A Tier-1 US Bank

Westpac just appointed Maggie Shi as Chief AI Innovation Officer, and the broader pattern of named C-suite AI leadership is starting to land in regulated jurisdictions before US peers. Expect the first major US bank to name a Chief AI Innovation Officer (or rename an existing role) inside one quarter, with the explicit operational mandate landing somewhere between the CIO, the CDO, and the chief risk officer.

For Your Team

Monday's meeting prompt: “For our top three AI-touched workflows, can we name the distribution surface, the compute supplier, the power source, the sovereign-procurement lane, and the workflow-level audit posture? If any answer is 'we are evaluating,' that is this quarter's project.”

The Stack-Building Framework:

- Front door — Name the distribution surface for every consumer-or-employee AI experience (which platform, which alternative-model selector, which privacy posture). If the answer is ”Apple Intelligence,” name the second lane.

- Breaker panel — Name the power source for every multi-year compute commitment (utility PPA, behind-the-meter SMR, on-site advanced reactor). If the answer is ”the grid,” name the contingency.

- Compute mezzanine — Name the compute supplier for every frontier-model workload (three named hyperscalers, named non-hyperscaler alternative, named sovereign lane). If the answer is ”AWS or Azure,” name the third option.

- Second-sovereign entrance — Name the sovereign-AI procurement lane for every regulated jurisdiction. If the answer is ”we standardize on the US-anchored stack,” name the China-anchored, the Saudi-anchored, and the named-third-country lane.

- Audit map — Name the workflow-level shadow-AI inventory for every regulated function (treasury, claims, prior auth, model risk). If the answer is ”the CISO has the inventory,” name the workflow-level posture.
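One way to make the framework above checkable is to record each workflow's answers and flag the layers that are still unnamed. The sketch below is a hedged illustration: the five layer labels follow Monday's meeting prompt, while `LAYERS`, `UNNAMED`, `unnamed_layers`, and the sample answers are hypothetical names chosen for the example.

```python
# Layer labels follow the meeting prompt; the constant and function names are assumptions.
LAYERS = (
    "distribution surface",
    "compute supplier",
    "power source",
    "sovereign lane",
    "audit posture",
)

# Answers that count as "not named yet" for this sketch.
UNNAMED = {"", "we are evaluating"}


def unnamed_layers(workflow_map: dict[str, str]) -> list[str]:
    """Return the layers with no named answer for one AI-touched workflow."""
    return [
        layer
        for layer in LAYERS
        if workflow_map.get(layer, "").strip().lower() in UNNAMED
    ]


# Illustrative answers for a single workflow.
claims_triage = {
    "distribution surface": "Apple Intelligence",
    "compute supplier": "We are evaluating",
    "power source": "utility PPA",
    "audit posture": "",
}
open_items = unnamed_layers(claims_triage)
```

Here `open_items` holds the compute supplier, the sovereign lane (missing entirely), and the audit posture, which is the list that becomes this quarter's project.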

Share-worthy stat: Anthropic just locked all of SpaceX's Colossus 1 compute capacity in one multi-year deal. Translation: the ”three-named-hyperscaler frontier-compute supply chain” assumption that has anchored every Tier-1 AI procurement plan since 2023 just got its first named non-hyperscaler alternative inside one Friday morning.

The Track of the Day

“The EU AI Act makes one thing clear: If you cannot observe, explain, and control your AI systems you cannot deploy them at scale.”

— Covasant analysis of EU AI Act Compliance for Autonomous AI Agents in 2026

Same logic applies to the building. If you cannot name the front door, the breaker panel, the compute mezzanine, the second-sovereign entrance, and the workflow-level audit map, you cannot run the building. You can only react to whoever names them first.

We scanned 190,000 articles this week so you don't have to. Data Pains → Business Gains.

Published: May 8, 2026 | Curated by Yves Mulkers @ Ins7ghts
